This is the second blog post on charting the number of new R Packages over time.

Check out the first blog post that looked at getting the data, performing some simple graphing and then researching some issues that were identified using the graph.

In this blog post I will look at how you can aggregate the data, plot it, and then plot it again using ggplot2, with a regression line and a trend line added using geom_smooth.

1. Prepare the data

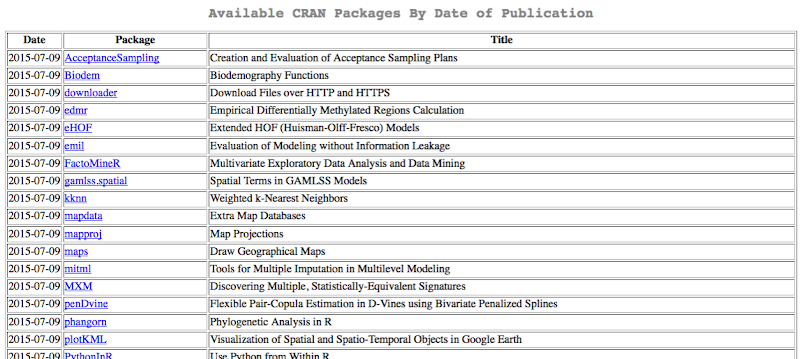

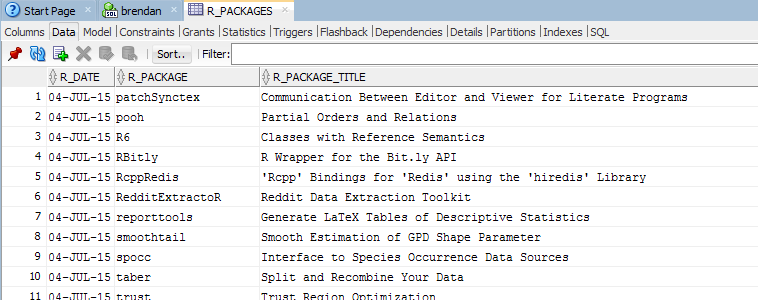

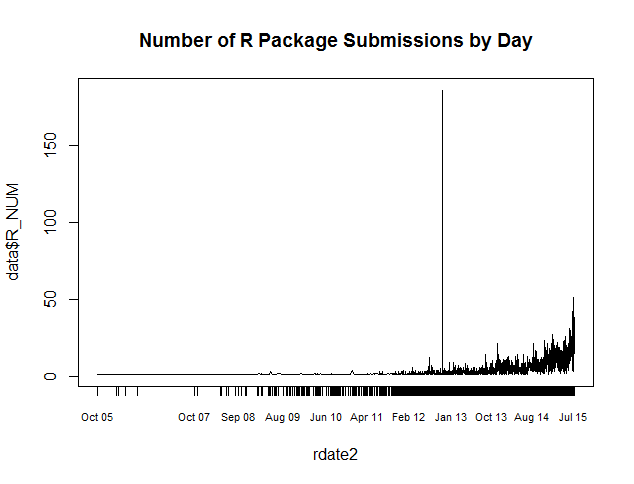

In my previous post we extracted and aggregated the data on a daily basis. This is the plot that was shown in my previous post. It gives us a very low-level graph, and we might get something a bit more usable if we aggregated the data. I have the data in an Oracle Database, so it would be easy for me to write another query to perform the necessary aggregation. But let's make things a little bit trickier. I'm going to use R to do the aggregation.

Our data set is in the data frame called data. What I want to do is to aggregate it up to monthly level. The first thing I did was to create a new column that contains the values of the new aggregate level.

data$R_MONTH <- format(rdate2, "%Y%m01")

data$R_MONTH <- as.Date(data$R_MONTH, "%Y%m%d")

data.sum <- aggregate(x = data[c("R_NUM")],

FUN = sum,

by = list(Group.date = data$R_MONTH)

)
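To make the aggregation step easier to try out, here is a minimal, self-contained sketch on a toy data frame. The column name R_NUM follows the post, but the R_DATE column and all of the values are made up for illustration, and the real data will look different.

```r
# Toy stand-in for the real data: one row per day with a count of new packages.
# R_DATE and the values below are assumptions made for this example only.
data <- data.frame(
  R_DATE = as.Date(c("2015-05-03", "2015-05-20", "2015-06-01",
                     "2015-06-15", "2015-07-09")),
  R_NUM  = c(4, 6, 3, 7, 2)
)

# Map every date to the first day of its month, then convert back to a Date.
data$R_MONTH <- as.Date(format(data$R_DATE, "%Y-%m-01"))

# Sum the daily counts within each month.
data.sum <- aggregate(x = data["R_NUM"],
                      by = list(Group.date = data$R_MONTH),
                      FUN = sum)

print(data.sum)
```

Keeping the month as a Date (the first day of the month) rather than a string means the plotting functions can treat it as a proper time axis.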

2. Plot the Data

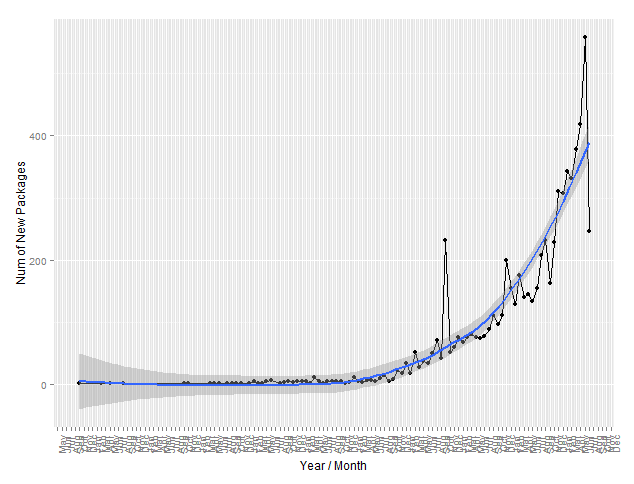

We now have the data aggregated at monthly level, so we can plot the graph. Ignore the last data point on the chart. This is for July 2015, and I extracted the data on the 9th of July, so we do not have a full month of data here.

plot(as.Date(data.sum$Group.date), data.sum$R_NUM, type="b", xaxt="n", cex=0.75 , ylab="Num New Packages", main="Number of New Packages by Month")

axis(1, at=data.sum$Group.date, labels=format(data.sum$Group.date, "%Y-%m"), cex.axis=0.5, las=1)

This gives us the following graph.
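Rather than ignoring the incomplete final month by eye, you could drop it before plotting. The following is only a sketch: the monthly totals are made-up values in the same shape as data.sum, and extract.date is a hypothetical variable holding the date the data was pulled (9 July 2015 in this post).

```r
# Hypothetical monthly totals in the same shape as data.sum (values are made up).
data.sum <- data.frame(
  Group.date = as.Date(c("2015-05-01", "2015-06-01", "2015-07-01")),
  R_NUM      = c(10, 10, 2)
)

# Date the extract was taken; an assumed helper variable, not from the post's code.
extract.date <- as.Date("2015-07-09")

# First day of the month containing the extract date; that month is incomplete.
partial.month <- as.Date(format(extract.date, "%Y-%m-01"))

# Keep only the months that had finished before the extract was taken.
data.full <- data.sum[data.sum$Group.date < partial.month, ]

print(data.full)
```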

3. Plot the data using ggplot2

The basic plot function of R is great and allows us to quickly and easily get some good graphs produced. But it is a bit limited and perhaps we want to create something that is a bit more elaborate. ggplot2 is a very popular package that can allow us to create a graph, building it up in a number of steps and layers to give something that is a lot more professional.

In the following example I've kept things simple, and yes, I could have done so much more. I'll leave that as an exercise for you to go off and do.

The first step is to use the qplot function to produce a basic plot using ggplot2. This gives us something similar to what we got from the plot function.

library(ggplot2)

qplot(x=factor(data.sum$Group.date), y=data.sum$R_NUM, data=data.sum,

xlab="Year/Month", ylab='Num of New Packages', asp=0.5)

This gives us the following graph.

Now if we call the ggplot function directly, rather than qplot, we need to specify a lot more information. Here is the equivalent plot built with ggplot (as a line plot).
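The code for this plot is not listed, so here is a minimal sketch of what an equivalent ggplot call might look like. It is based on the trend line example later in this post with the geom_smooth layer left out, and the monthly totals are made-up values in the same shape as data.sum.

```r
library(ggplot2)

# Hypothetical monthly totals in the same shape as data.sum (values are made up).
data.sum <- data.frame(
  Group.date = as.Date(c("2015-01-01", "2015-02-01", "2015-03-01",
                         "2015-04-01", "2015-05-01", "2015-06-01")),
  R_NUM      = c(100, 120, 115, 140, 150, 170)
)

# Build the plot in layers: data and aesthetics first, then the line, then labels.
plt <- ggplot(data.sum, aes(x = factor(Group.date), y = R_NUM)) +
  geom_line(aes(group = 1)) +  # group = 1 joins the points across the discrete x axis
  xlab("Year/Month") +
  ylab("Num of New Packages")

plt
```

Because the x axis is a factor here, geom_line needs group = 1 to know that all of the points belong to a single line.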

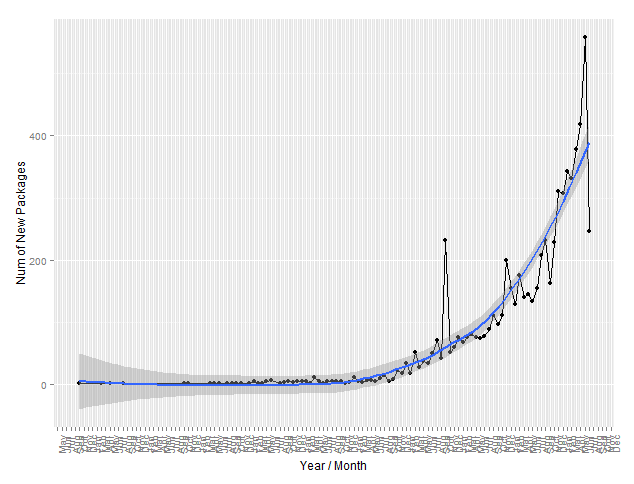

4. Include a trend line

We can very easily include a trend line in a ggplot2 graph using the geom_smooth command. In the following example we have the same chart and include a linear regression line.

plt <- ggplot(data.sum, aes(x=factor(data.sum$Group.date), y=data.sum$R_NUM)) + geom_line(aes(group=1)) +

theme(text = element_text(size=7),

axis.text.x = element_text(angle=90, vjust=1)) + xlab("Year / Month") + ylab("Num of New Packages") +

geom_smooth(method='lm', se=TRUE, size = 0.75, fullrange=TRUE, aes(group=20))

plt

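The regression line summarises the overall linear trend in the monthly totals, and the shaded band (from se=TRUE) shows the confidence interval around it.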
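If you want the numbers behind the regression line rather than just the picture, you can fit the model yourself with lm. This is a sketch under some assumptions: the monthly totals are made up, and idx is a hypothetical month index (1, 2, 3, ...) that mirrors how a factor x axis is positioned on the chart.

```r
# Hypothetical monthly totals in the same shape as data.sum (values are made up).
data.sum <- data.frame(
  Group.date = as.Date(c("2015-01-01", "2015-02-01", "2015-03-01",
                         "2015-04-01", "2015-05-01", "2015-06-01")),
  R_NUM      = c(100, 120, 115, 140, 150, 170)
)

# Month index 1, 2, 3, ... so the slope reads as "new packages per month".
data.sum$idx <- seq_len(nrow(data.sum))

# Ordinary least squares fit of the monthly totals against the month index.
fit <- lm(R_NUM ~ idx, data = data.sum)

# Intercept and slope of the regression line.
print(coef(fit))

# Fitted values with a 95% confidence interval (columns fit, lwr, upr).
pred <- predict(fit, interval = "confidence", level = 0.95)
print(pred)
```

The slope tells you the average change in the number of new packages from one month to the next, which is hard to read off the chart directly.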

But perhaps we would like a trend line that follows the data more closely, something like a moving average. The next example uses a loess smoother for this. I've also added a bit of date scaling to help represent the data at a monthly level.

library(scales)

plt <- ggplot(data.sum, aes(x=as.POSIXct(data.sum$Group.date), y=data.sum$R_NUM)) + geom_line() + geom_point() +

theme(text = element_text(size=12),

axis.text.x = element_text(angle=90, vjust=1)) + xlab("Year / Month") + ylab("Num of New Packages") +

geom_smooth(method='loess', se=TRUE, size = 0.75, fullrange=TRUE) +

scale_x_datetime(breaks = date_breaks("months"), labels = date_format("%b"))

plt

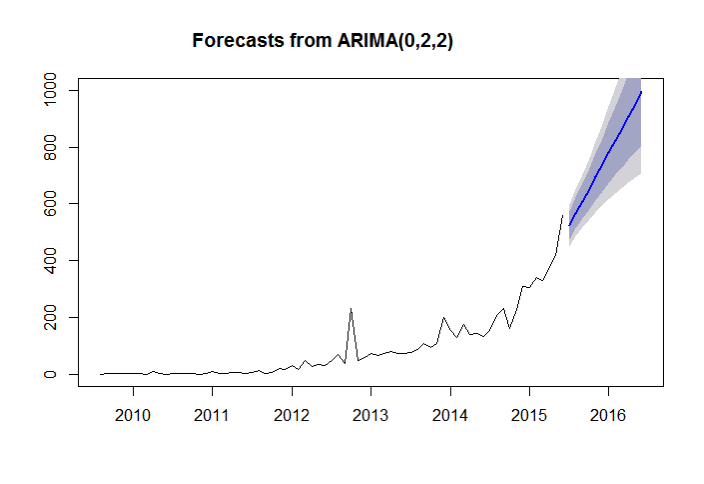
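The loess line is a local regression smoother rather than a true moving average. If you do want an actual moving average, one possible approach in base R is stats::filter. This is only a sketch: the monthly totals are made up, the MA3 column name is hypothetical, and the 3-month window is an arbitrary choice.

```r
# Hypothetical monthly totals in the same shape as data.sum (values are made up).
data.sum <- data.frame(
  Group.date = as.Date(c("2015-01-01", "2015-02-01", "2015-03-01",
                         "2015-04-01", "2015-05-01", "2015-06-01")),
  R_NUM      = c(100, 120, 115, 140, 150, 170)
)

# Centred 3-month moving average: each value is the mean of the month before,
# the month itself and the month after. The first and last months come out as NA.
ma <- stats::filter(data.sum$R_NUM, rep(1 / 3, 3), sides = 2)
data.sum$MA3 <- as.numeric(ma)

print(data.sum)

# Plot the monthly totals and overlay the moving average as a dashed line.
plot(data.sum$Group.date, data.sum$R_NUM, type = "b",
     xlab = "Year / Month", ylab = "Num of New Packages")
lines(data.sum$Group.date, data.sum$MA3, lty = 2)
```

A wider window gives a smoother line but leaves more missing values at each end of the series.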

In the third blog post on this topic I will look at how we can use some of the forecasting and prediction functions available in R. We can use these to help us visualize what the future growth patterns might be for this data. I have some interesting things to show.