A couple of weeks ago, during the madness of Oracle Open World, there were some new product releases and lots of updates to existing products.

One such product was SQL Developer, which got an Early Adopter release (EA1). This is where you can try out the new version of the product, but you need to be careful as it is not the GA/Production version, so it may have some "features".

One component of SQL Developer is the Oracle Data Miner tool. This is a GUI, workflow-based tool built on the Oracle Advanced Analytics option. At OOW we got to hear about the various new Oracle Data Mining features that are coming with the Oracle 12.2 Database. For Oracle Data Miner (ODMr) 4.2 (EA) there are a lot of new features, but most of these are hidden and will only become available when you are using the Oracle 12.2 DB.

But if you are using a 12.1 (or earlier) database, there are still some new features. I've been having a bit of a look around the EA1 release to see what is new and available to us now (while we wait for 12.2).

If you are on Oracle 12.1 DB or earlier there are two main new features. These are a new Workflow Scheduler and the ability to specify in-memory options for ODMr objects. Both can be easily found on the ODMr menu bar and are highlighted in the following image.

Let us now have a quick look at these.

ODMr Workflow Scheduler

The Workflow Scheduler allows us to take an ODMr Workflow and schedule it to run in the Oracle Database at a defined time or on a defined schedule. Previously we would have to write the SQL and PL/SQL code to enable the scheduling. Plus the ODMr workflow was output as a number of SQL scripts. So it was a little bit of a challenge to get the workflow running on a regular basis.

Now with the new in-built ODMr Scheduler we can quickly and easily do this without having to write a line of SQL or PL/SQL. The tool will look after the hard bit for us. We can schedule the entire workflow or certain parts of the workflow.

When setting up your schedule you can pick the Start Date, how frequently you would like it to run (daily, weekly, monthly or some other custom frequency), and when it should end (never, after X number of runs, or on a specific date). You can also re-use an existing schedule.

For the advanced settings you can set up email notification, the job priority level, maximum run durations and limits, and the time zone to use.
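To give a feel for what the scheduler saves you from, here is a rough sketch of the kind of hand-written job you might previously have set up with the standard `DBMS_SCHEDULER` package. The job name `ODMR_WF_NIGHTLY` and the procedure `RUN_MY_WORKFLOW` are made-up placeholders, and this is not the code that ODMr itself generates.

```sql
-- Hypothetical example: RUN_MY_WORKFLOW stands in for whatever procedure
-- wraps the SQL scripts generated for your workflow.
BEGIN
  DBMS_SCHEDULER.CREATE_JOB(
    job_name        => 'ODMR_WF_NIGHTLY',   -- assumed job name
    job_type        => 'STORED_PROCEDURE',
    job_action      => 'RUN_MY_WORKFLOW',   -- assumed procedure name
    start_date      => SYSTIMESTAMP,        -- the Start Date
    repeat_interval => 'FREQ=DAILY;BYHOUR=2;BYMINUTE=0;BYSECOND=0',
    enabled         => FALSE,               -- enable once fully configured
    comments        => 'Nightly run of a data mining workflow');

  -- End the schedule after a fixed number of runs.
  DBMS_SCHEDULER.SET_ATTRIBUTE('ODMR_WF_NIGHTLY', 'max_runs', 30);

  -- Job priority level (1 is highest, 5 is lowest).
  DBMS_SCHEDULER.SET_ATTRIBUTE('ODMR_WF_NIGHTLY', 'job_priority', 2);

  DBMS_SCHEDULER.ENABLE('ODMR_WF_NIGHTLY');
END;
/
```

The `repeat_interval` string is where the daily, weekly or monthly choice ends up, for example `FREQ=WEEKLY;BYDAY=MON` for a weekly run on Mondays.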

ODMr In-memory Options

To access the in-memory options you can click on the 'Performance Options' button on the ODMr menu or you can access it via the menu (Tools -> Preferences) to get the complete list of in-memory settings.

When you use ODMr to build your data mining workflows, ODMr will create a number of objects for each of the nodes of the workflow. These are typically created as tables in your schema. The previous version of ODMr introduced the Performance Options, where you could set the degree of parallelism to use for some nodes and for the underlying SQL and PL/SQL code that is generated.

Now we can specify whether the tables created should be in-memory, and so avail of the significantly faster response times when you are using the data in these tables. This is particularly useful as we work with larger and larger data sets and we want lightning-fast responses from some of our data mining tasks.

In addition to turning on the in-memory option for certain nodes, we can also specify the in-memory configuration settings such as the level of Columnar Compression to use and the Priority Level.
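If you wanted to apply the same kind of settings by hand, a minimal sketch might look like the following. It assumes the Database In-Memory option is available (12.1.0.2 onwards) with an in-memory area configured. The table name `ODMR_NODE_OUTPUT` is a hypothetical stand-in for one of the tables a workflow node creates, and this is not necessarily the statement ODMr issues for you.

```sql
-- ODMR_NODE_OUTPUT is a hypothetical table name used for illustration.
-- MEMCOMPRESS sets the columnar compression level,
-- PRIORITY controls how eagerly the table is populated into memory.
ALTER TABLE odmr_node_output
  INMEMORY MEMCOMPRESS FOR QUERY LOW PRIORITY HIGH;

-- Check which in-memory settings are now recorded for the table.
SELECT table_name, inmemory, inmemory_compression, inmemory_priority
FROM   user_tables
WHERE  table_name = 'ODMR_NODE_OUTPUT';
```

Other compression levels include `MEMCOMPRESS FOR QUERY HIGH` and `MEMCOMPRESS FOR CAPACITY LOW` or `HIGH`, and the priority can range from `NONE` up to `CRITICAL`.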

(I've been on the 12.2 beta so I've had a chance to try out many of the new features. There is some good stuff coming and I'll have blog posts about these when 12.2 goes GA.)

Enter a title for the report, and accept the default settings


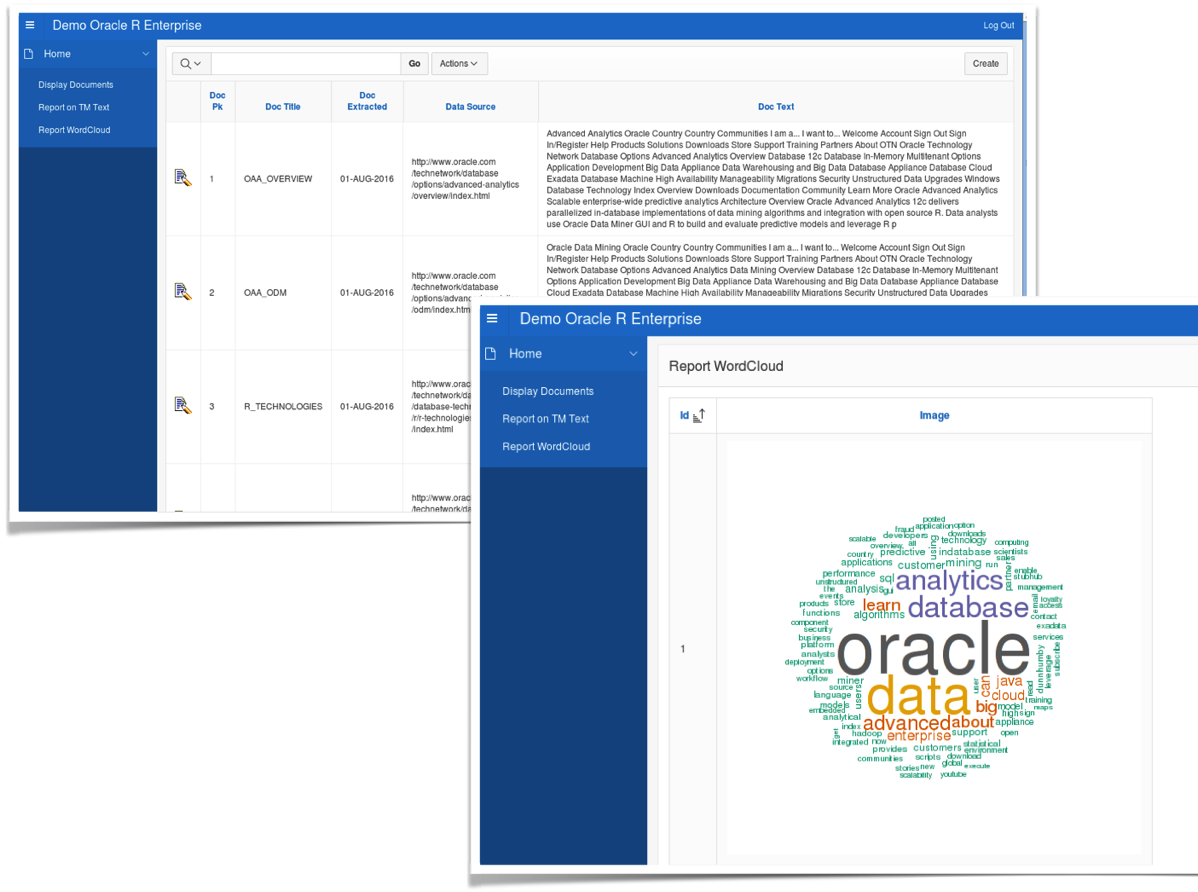

In this blog post I showed you how to use Oracle R Enterprise and the embedded R execution features of ORE to take the text from the webpages and create a word cloud. This is a useful tool for seeing visually which words stand out most on your webpage and whether the correct message is being put across to your customers.
