This is the final part of a four-part blog post on building and using Association Rules in the Oracle Database using Oracle Data Miner. The following outlines the contents of each post in the series on Association Rules:

- The first post focused on how to build an Association Rule model.

- The second post was on examining the Association Rules produced by ODM.

- The third post focused on using the Association Rules on your data.

- The final post looks at how you can do some of the above steps using the ODM SQL and PL/SQL functions (this blog post).

In my previous posts I showed how you can go about setting up for Association Rule analysis in Oracle Data Miner and how to examine the rules that are generated.

This post will focus on how we build and use association rules using the functionality that is available in SQL and PL/SQL.

Step 1 – Build the Settings Table

As with all Oracle Data Mining functions in SQL and PL/SQL, you will need to set up a settings table. This table contains all the settings necessary to run the model build functions. It is a good idea to create a separate settings table for each model build that you complete.

CREATE TABLE assoc_sample_settings (

setting_name VARCHAR2(30),

setting_value VARCHAR2(4000));

Step 2 – Define the Settings for the Model

Before you generate your model you need to set some of the parameters for the algorithm. To start with, you need to define that we are going to generate an Association Rules model and turn off the Automatic Data Preparation. We also tell the algorithm which column holds the item identifier, which is PROD_ID in our data.

We can also set 3 additional settings for Association Rules.

The ASSO_MIN_SUPPORT setting has a default of 0.1, or 10%. That means that only rules that occur in 10% or more of the cases will be generated. For market basket data this figure is really too high. In the code below we will set it to 1%. This matches the settings that we used in SQL Developer in my previous posts.

BEGIN

INSERT INTO assoc_sample_settings (setting_name, setting_value) VALUES

(dbms_data_mining.algo_name, dbms_data_mining.ALGO_APRIORI_ASSOCIATION_RULES);

INSERT into assoc_sample_settings (setting_name, setting_value) VALUES

(dbms_data_mining.prep_auto, dbms_data_mining.prep_auto_off);

INSERT into assoc_sample_settings (setting_name, setting_value) VALUES

(dbms_data_mining.ODMS_ITEM_ID_COLUMN_NAME, 'PROD_ID');

INSERT into assoc_sample_settings (setting_name, setting_value) VALUES

(dbms_data_mining.ASSO_MIN_SUPPORT, 0.01);

COMMIT;

END;

/
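The other two Association Rules settings are ASSO_MIN_CONFIDENCE and ASSO_MAX_RULE_LENGTH. The following is a minimal sketch of how they could be added to the same settings table, followed by a quick check of what the table now contains. The values 0.1 and 4 are only illustrative; as far as I am aware they simply restate the documented defaults, so treat them as assumptions and pick values that suit your own data.

```sql
-- Optional: set the remaining two Association Rules parameters.
-- The values below are illustrative assumptions, not recommendations.
BEGIN
  INSERT INTO assoc_sample_settings (setting_name, setting_value) VALUES
    (dbms_data_mining.ASSO_MIN_CONFIDENCE, 0.1);

  INSERT INTO assoc_sample_settings (setting_name, setting_value) VALUES
    (dbms_data_mining.ASSO_MAX_RULE_LENGTH, 4);

  COMMIT;
END;
/

-- Check what will be passed to the model build
SELECT setting_name,
       setting_value
FROM   assoc_sample_settings
ORDER  BY setting_name;
```

Each setting should appear only once in the table, so if you rerun the inserts, delete the existing rows first.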

Step 3 – Prepare the Data

In our example scenario we are using the SALES data that is part of the SH schema. The CREATE_MODEL procedure needs to have an attribute (CASE_ID) that identifies the key of the shopping basket. In our case the basket is identified by two attributes, so we will need to use a combined key. This combined key consists of the CUST_ID and the TIME_ID. This links all the transaction records related to the one shopping event together.

Apart from the key, we only need the attribute that holds the items. In our Association Rules (Market Basket Analysis) scenario, we will need to include the PROD_ID attribute. This contains the product key of each product that was included in the basket.

CREATE VIEW ASSOC_DATA_V AS (

SELECT RANK() OVER (ORDER BY CUST_ID, TIME_ID) CASE_ID,

t.PROD_ID

FROM SH.SALES t );
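Before building the model it can be worth checking what the view will feed into the algorithm. The sketch below uses only the ASSOC_DATA_V view defined above. The first query counts the records and the distinct baskets, and the second shows, for each product, the fraction of baskets that contain it, which gives a feel for what a 1% minimum support means on this data. The aliases are my own.

```sql
-- How many records and how many baskets (cases) are in the view?
SELECT COUNT(*)                AS total_records,
       COUNT(DISTINCT case_id) AS total_baskets
FROM   assoc_data_v;

-- Support of each single product: baskets containing it / all baskets
WITH total AS (
  SELECT COUNT(DISTINCT case_id) AS n
  FROM   assoc_data_v
)
SELECT v.prod_id,
       COUNT(DISTINCT v.case_id)                       AS baskets_with_product,
       ROUND(COUNT(DISTINCT v.case_id) / MAX(t.n), 4)  AS support
FROM   assoc_data_v v
       CROSS JOIN total t
GROUP  BY v.prod_id
ORDER  BY baskets_with_product DESC;
```

A product whose support is below the ASSO_MIN_SUPPORT value cannot take part in any generated rule, so this query is a quick way to see how many products are likely to survive the threshold.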

Step 4 – Create the Model

We will need to use the DBMS_DATA_MINING.CREATE_MODEL procedure. This will use the settings in our ASSOC_SAMPLE_SETTINGS table. We will use the view created in Step 3 above and use the CASE_ID attribute we created as the Case ID in the procedure call.

BEGIN

DBMS_DATA_MINING.CREATE_MODEL(

model_name => 'ASSOC_MODEL_2',

mining_function => DBMS_DATA_MINING.ASSOCIATION,

data_table_name => 'ASSOC_DATA_V',

case_id_column_name => 'CASE_ID',

target_column_name => null,

settings_table_name => 'assoc_sample_settings');

END;

/

On my laptop this took approximately 5 seconds to run on just over 918K records involving just over 143K cases or baskets.

Now that is quick!!!

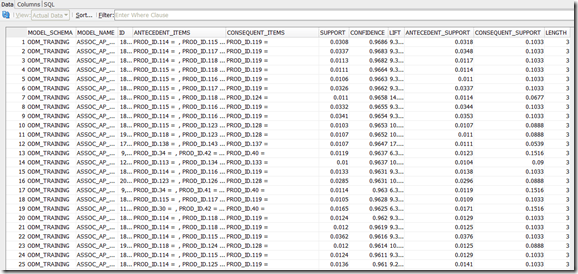

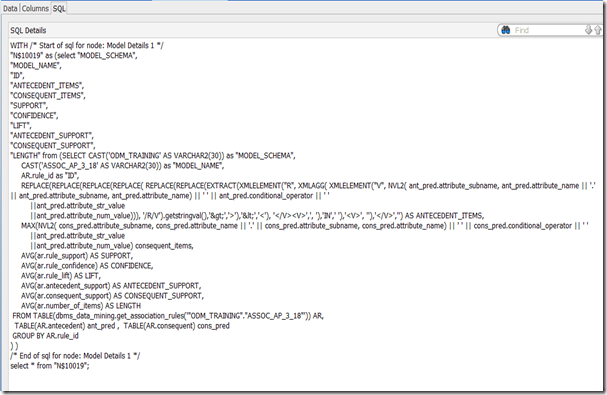

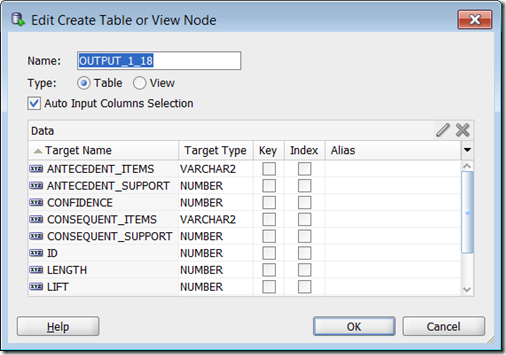

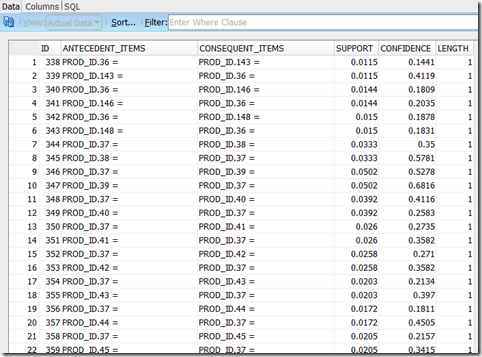
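Once the build completes, the data dictionary can be used to confirm that the model exists and to see the settings it was actually built with. This is a sketch that assumes the model was created in your own schema, so the USER_ views apply. The commented-out block at the end shows how the model could be dropped if you want to rerun Step 4 under the same name.

```sql
-- Confirm the model was created and see how long the build took
SELECT model_name,
       mining_function,
       algorithm,
       creation_date,
       build_duration
FROM   user_mining_models
WHERE  model_name = 'ASSOC_MODEL_2';

-- List the settings used for the build, including any defaults
SELECT setting_name,
       setting_value,
       setting_type
FROM   user_mining_model_settings
WHERE  model_name = 'ASSOC_MODEL_2'
ORDER  BY setting_name;

-- To rebuild under the same name, drop the existing model first:
-- BEGIN
--   DBMS_DATA_MINING.DROP_MODEL('ASSOC_MODEL_2');
-- END;
-- /
```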

Step 5 – View the Model Outputs

There are a couple of functions that can be used to extract the rules produced in our previous step. These include:

GET_ASSOCIATION_RULES : This returns the rules from an association model.

SELECT rule_id,

antecedent,

consequent,

rule_support,

rule_confidence

FROM TABLE(DBMS_DATA_MINING.GET_ASSOCIATION_RULES('assoc_model_2', 10));

The 10 here limits the output to the top 10 rules.
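The ANTECEDENT and CONSEQUENT columns are nested collections, so they do not display well in every tool. One way to get readable output is to unnest them with the TABLE operator, as in the sketch below. Which predicate attribute carries the product identifier can depend on the database version and on how the data was presented to the algorithm, so the query selects both ATTRIBUTE_NAME and ATTRIBUTE_SUBNAME. Treat the column choice as an assumption to check against your own output.

```sql
-- One row per antecedent item / consequent item combination of each rule
SELECT r.rule_id,
       a.attribute_name    AS antecedent_attribute,
       a.attribute_subname AS antecedent_item,
       c.attribute_name    AS consequent_attribute,
       c.attribute_subname AS consequent_item,
       r.rule_support,
       r.rule_confidence,
       r.rule_lift
FROM   TABLE(DBMS_DATA_MINING.GET_ASSOCIATION_RULES('assoc_model_2', 10)) r,
       TABLE(r.antecedent) a,
       TABLE(r.consequent) c
ORDER  BY r.rule_confidence DESC,
          r.rule_id;
```

A rule with more than one item in its antecedent is returned as several rows that share the same RULE_ID.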

GET_FREQUENT_ITEMSETS : This returns a set of rows that represent the frequent item sets from an association model. In the following code we want the top 30 item sets to be returned, but filtered to only display item sets that contain 2 or more items.

SELECT itemset_id,

items,

support,

number_of_items

FROM TABLE(DBMS_DATA_MINING.GET_FREQUENT_ITEMSETS('assoc_model_2', 30))

WHERE number_of_items >= 2;
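The ITEMS column is also a nested collection. The sketch below unnests it so that each item in an item set appears on its own row. It assumes the 11g style collection, where each item is an object with ATTRIBUTE_NAME, ATTRIBUTE_SUBNAME, ATTRIBUTE_STR_VALUE and ATTRIBUTE_NUM_VALUE attributes. On older releases the collection has a different shape, so check the documentation for your version before relying on these column names.

```sql
-- One row per item in each frequent item set with 2 or more items
SELECT f.itemset_id,
       i.attribute_name,
       i.attribute_subname,
       i.attribute_str_value,
       i.attribute_num_value,
       f.support,
       f.number_of_items
FROM   TABLE(DBMS_DATA_MINING.GET_FREQUENT_ITEMSETS('assoc_model_2', 30)) f,
       TABLE(f.items) i
WHERE  f.number_of_items >= 2
ORDER  BY f.support DESC,
          f.itemset_id;
```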