Tuesday, September 5, 2017

Monday, August 28, 2017

How to speed up your Oracle Data Mining with in-memory and parallel

Have you found running a workflow in Oracle Data Miner, or running the scripts in the database, slow?

No. Good, because I haven't found it slow.

But (there is always a but) it really depends on the volume of data you are dealing with. The vast majority of us, who aren't the size of Google, Amazon, etc., have data volumes that are not really that large, and a basic server can process many millions of records extremely quickly using Oracle Data Mining.

But what if we have a large volume of data? In one recent project I had a data set containing over 3.5 billion records. Now that is big data. All of this data was sitting in an Oracle Database.

So how can we process over 3.5 billion records in a couple of seconds, building 4 machine learning models in that time? Is that really possible with just using an Oracle Database? Yes is the answer and very easily. (Surely I needed Hadoop and Spark to process this data? Nope!)

The Oracle Data Miner (ODMr) tool comes with a new feature in SQL Developer 4 (and higher) that allows you to manage the use of Parallel execution and the in-memory DB features. These can be accessed on the ODMr Worksheet tool bar.

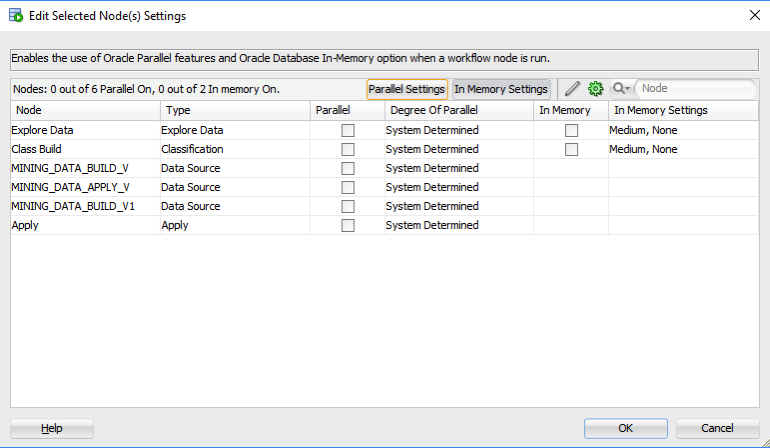

The best time to look at these settings is when you have created your workflow and are ready to run it for the first time. When you click on the 'Performance Options' link, you will get the following window. It will display the list of nodes you have in the workflow and will then indicate if the Degree of Parallel and the In-Memory options can be set for each of the nodes.

The default values are shown and you can change these. For example, in a lot of scenarios you might prefer to leave the Degree of Parallel as System Determined. This will then use whatever the default is for the database, as controlled by the DBA. But if you want to specify a particular value then you can, for example setting the Degree of Parallel to 4 for the 'Class Build' node in the above image. Similarly, the in-memory option will only be available for nodes where it is applicable. This will be where there is a lot of data processing (preparing data, transforming data, performing specific statistics, etc.) and for storing any data that is generated by Oracle Data Mining.

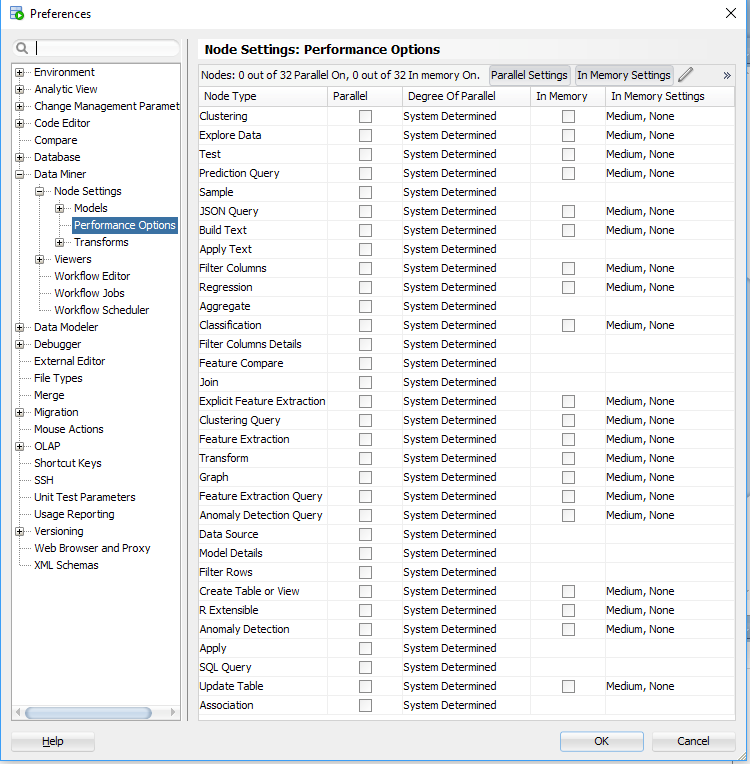

But what if you want to change the default values? You can change these at a global level within the SQL Developer Preferences. Here you can set the default to be used for each of the different types of Oracle Data Mining nodes.

I mentioned at the start that I've been able to build 4 machine learning models using Oracle Data Mining on a data set of over 3.5 billion records, all in a couple of seconds. In my scenario Parallel was set to 16 and we didn't use in-memory as we didn't have the licence for it. You can see that machine learning at lightning speed (ish) is possible. This timing is only for building the models, which is the step that consumes the most resources and time. When it comes to scoring the data, that is lightning fast. In my scenario, scoring over 300,000 records took less than a second, and I didn't use parallel or anything else to speed things up. Because we didn't need to.

Go give it a try!

Monday, August 21, 2017

Scheduling ODMr Workflows in SQL Developer 4.2+

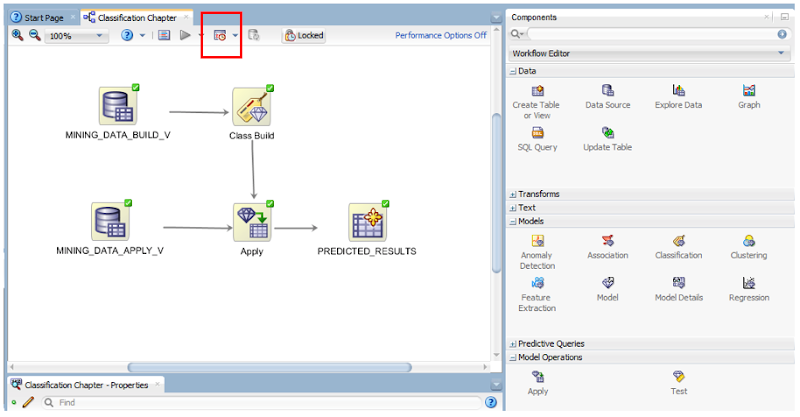

Just above the workflow worksheet we have a row of icon buttons. In the above image one of these is highlighted by a red box. This is the workflow scheduler. So go ahead and click on it.

In most cases you will want to run the entire workflow. The default option presented is 'All Nodes'. If you would only like a subset of the nodes to run, you can select those nodes in the workflow and then click on the scheduler icon. In our example we are going to run the entire workflow, so select 'All Nodes' from the menu.

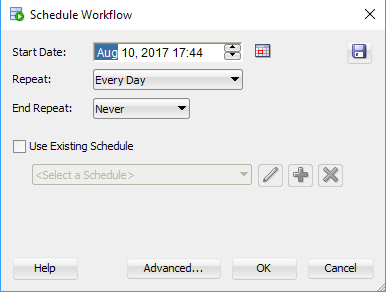

The main scheduler window will open. Here you can set the Start Date and time of the first run, the Repeat frequency (none, every day, every week or custom) and when to End the Repeat (Never, After, On Date). To schedule a one-off run of the workflow just set the Date and Time and set the Repeat to 'None'. End Repeat should disappear in this instance. If Repeat is set to another value then you can set a value for End Repeat.

Go ahead and run the scheduler by clicking on the OK button.

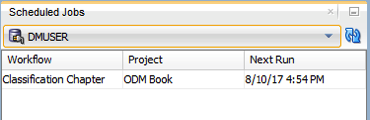

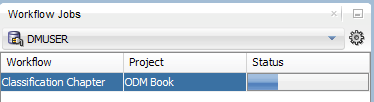

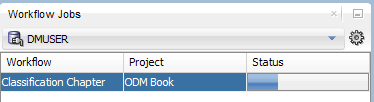

A Scheduled Jobs window should open that will display the details of the scheduled job. When this job is run in the database, this will be shown in the Workflow Jobs window. Here you can see and monitor the progress of the workflow.

And that's it. Nice and simple.

But there is something you need to be WARNED about. When you schedule a workflow, Oracle Data Miner will lock the workflow. This is to ensure that no changes can be made to the scheduled workflow. This is indicated by the Locked button appearing on the icon menu. If you click on this button to unlock the workflow, it will also cancel the scheduled jobs associated with this workflow.

Also when the scheduled workflow is finished, the workflow will remain locked. So you will have to click on this Locked button to unlock the workflow.

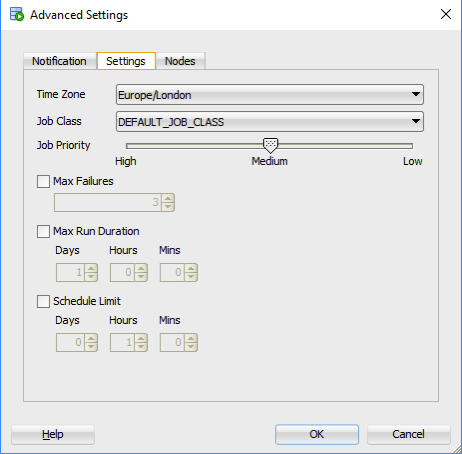

There are a few additional advanced features. These can be found by clicking on the 'Advanced...' button in the main scheduler window. The first tab displayed allows you to specify if you want an email sent for the different stages of the scheduled job. The second tab allows you to set the Job Priority, Max Failures, Max Run Duration and Schedule Limits.

Monday, August 7, 2017

Auto enabling APPROX_* function in the Oracle Database

With the releases of 12.1 and 12.2 of Oracle Database we have seen some new functions that perform approximate calculations. These include:

- APPROX_COUNT_DISTINCT

- APPROX_COUNT_DISTINCT_DETAIL

- APPROX_COUNT_DISTINCT_AGG

- APPROX_MEDIAN

- APPROX_PERCENTILE

- APPROX_PERCENTILE_DETAIL

- APPROX_PERCENTILE_AGG

These functions can be used when approximate answers can be used instead of the exact answer. You can have many scenarios for these, and particularly as we move into the big data world, the ability to process our data quickly is slightly more important than exact numbers. For example, is there really a difference between 40% of our customers being of type X versus 41%? The real answer to this is, 'It Depends!', but for a lot of analytical and advanced analytical methods this difference doesn't really matter.

There are various reports of performance improvement of anything from 6x to 50x with the response times of the queries that are using these functions, instead of using the more traditional functions.
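To get a feel for why an approximate distinct count can be cheaper than an exact one, here is a minimal Python sketch of a 'k minimum values' estimator. It is only an illustration of the general idea and should not be read as how Oracle implements APPROX_COUNT_DISTINCT. The function names, the use of SHA-1 and the value k=256 are all assumptions made for the example. The point is that the estimator only ever remembers the k smallest hash values, rather than every distinct value it has seen.

```python
import hashlib
import heapq


def _hash01(value):
    """Map a value to a pseudo-random float in [0, 1) using SHA-1."""
    digest = hashlib.sha1(str(value).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64


def approx_count_distinct(values, k=256):
    """Estimate the number of distinct values from the k smallest hashes.

    Illustrative only: not Oracle's algorithm, and k=256 is an arbitrary choice.
    """
    heap = []        # max-heap (negated values) holding the k smallest hashes
    members = set()  # hashes currently held in the heap
    for value in values:
        h = _hash01(value)
        if h in members:
            continue  # duplicate of a value we are already tracking
        if len(heap) < k:
            heapq.heappush(heap, -h)
            members.add(h)
        elif h < -heap[0]:
            # Smaller than the largest tracked hash: swap it in.
            evicted = -heapq.heappushpop(heap, -h)
            members.discard(evicted)
            members.add(h)
    if len(heap) < k:
        return len(heap)  # fewer than k distinct values, so the count is exact
    kth_smallest = -heap[0]
    return int((k - 1) / kth_smallest)


# Hypothetical data: 10,500 rows containing 1,500 distinct customer ids.
rows = [i % 1500 for i in range(10500)]
print("exact :", len(set(rows)))
print("approx:", approx_count_distinct(rows))
```

The estimate will typically be a few percent away from the exact answer, which is the same kind of trade-off the APPROX_* functions ask you to accept.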

If you are a BI or big data analyst, you have probably built lots of code and queries using the more traditional functions. But what if you now want to use the newer functions? Does this mean you have to go and modify all the code you have written over the years? You can imagine getting approval to do this!

The simple answer to this question is 'No'. No you don't have to change any code, but with some parameter changes for the DB or your session you can tell the database to automatically switch from using the traditional functions (count, etc) to the newer more optimised and significantly faster APPROX_* functions.

So how can you do this magic?

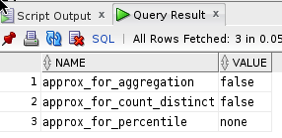

First let us see what the current settings are:

    SELECT name, value
    FROM   v$ses_optimizer_env
    WHERE  sid = sys_context('USERENV','SID')
    AND    name like '%approx%';

Now let us run a query to test what happens using the default settings (on a table I have with 10,500 records).

    set autotrace on

    select count(distinct cust_id) from test_inmemory;

    COUNT(DISTINCTCUST_ID)
    ----------------------
                      1500

    Execution Plan
    ----------------------------------------------------------
    Plan hash value: 2131129625

    --------------------------------------------------------------------------------------
    | Id  | Operation            | Name          | Rows  | Bytes | Cost (%CPU)| Time     |
    --------------------------------------------------------------------------------------
    |   0 | SELECT STATEMENT     |               |     1 |    13 |    70   (2)| 00:00:01 |
    |   1 |  SORT AGGREGATE      |               |     1 |    13 |            |          |
    |   2 |   VIEW               | VW_DAG_0      |  1500 | 19500 |    70   (2)| 00:00:01 |
    |   3 |    HASH GROUP BY     |               |  1500 |  7500 |    70   (2)| 00:00:01 |
    |   4 |     TABLE ACCESS FULL| TEST_INMEMORY | 10500 | 52500 |    69   (0)| 00:00:01 |
    --------------------------------------------------------------------------------------

Let us now enable the automatic use of the APPROX_* functions.

    alter session set approx_for_aggregation = TRUE;

    select count(distinct cust_id) from test_inmemory;

    COUNT(DISTINCTCUST_ID)
    ----------------------
                      1495

    Execution Plan
    ----------------------------------------------------------
    Plan hash value: 1029766195

    ---------------------------------------------------------------------------------------
    | Id  | Operation             | Name          | Rows  | Bytes | Cost (%CPU)| Time     |
    ---------------------------------------------------------------------------------------
    |   0 | SELECT STATEMENT      |               |     1 |     5 |    69   (0)| 00:00:01 |
    |   1 |  SORT AGGREGATE APPROX|               |     1 |     5 |            |          |
    |   2 |   TABLE ACCESS FULL   | TEST_INMEMORY | 10500 | 52500 |    69   (0)| 00:00:01 |
    ---------------------------------------------------------------------------------------

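We can see above that the APPROX_* equivalent function was used (SORT AGGREGATE APPROX), and slightly less work was done. Notice also that the answer is now 1495 instead of the exact 1500. But we only used this on a very small table.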

The full list of session level settings is:

    alter session set approx_for_aggregation = TRUE;
    alter session set approx_for_aggregation = FALSE;
    alter session set approx_for_count_distinct = TRUE;
    alter session set approx_for_count_distinct = FALSE;
    alter session set approx_for_percentile = 'PERCENTILE_CONT DETERMINISTIC';
    alter session set approx_for_percentile = PERCENTILE_DISC;
    alter session set approx_for_percentile = NONE;

Or at a system wide level:

    alter system set approx_for_aggregation = TRUE;
    alter system set approx_for_aggregation = FALSE;
    alter system set approx_for_count_distinct = TRUE;
    alter system set approx_for_count_distinct = FALSE;
    alter system set approx_for_percentile = 'PERCENTILE_CONT DETERMINISTIC';
    alter system set approx_for_percentile = PERCENTILE_DISC;
    alter system set approx_for_percentile = NONE;

And to reset back to the default settings:

    alter system reset approx_for_aggregation;
    alter system reset approx_for_count_distinct;
    alter system reset approx_for_percentile;

Monday, July 31, 2017

Part 5 - The right to be forgotten (EU GDPR)

This is the fifth part of a series of blog posts on 'How the EU GDPR will affect the use of Machine Learning'

Article 17, titled Right to erasure ('right to be forgotten'), allows a person to have their personal data erased, and requires the data controller to ensure that the personal data is erased without undue delay.

This does not mean that their data can simply be flagged for non-contact, as I've seen done in many companies, only for one of these people to end up being contacted anyway.

It will also allow people to choose not to take part in data profiling. This means that these people cannot be included in any of the input data sets. It should not be the scenario where they are included but flagged as not to be contacted in any post ML process where the consumers are contacted, which is what I've seen in lots of places.
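The difference between removing people before modelling and flagging them afterwards can be sketched in a few lines of Python. This is a minimal illustration under assumed names: the `cust_id` field, the two id lists and the function name are all hypothetical, and in practice this filtering would more likely be done in the database view that feeds the models.

```python
def eligible_for_profiling(customers, erased_ids, profiling_opt_outs):
    """Return only the customers that may be used as model input.

    Anyone who asked for erasure or opted out of profiling is dropped
    before any model sees the data, rather than flagged after scoring.
    """
    excluded = set(erased_ids) | set(profiling_opt_outs)
    return [c for c in customers if c["cust_id"] not in excluded]


# Hypothetical example data.
customers = [
    {"cust_id": 101, "age": 34},
    {"cust_id": 102, "age": 51},
    {"cust_id": 103, "age": 27},
]
training_input = eligible_for_profiling(
    customers, erased_ids=[102], profiling_opt_outs=[103]
)
print([c["cust_id"] for c in training_input])  # prints [101]
```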

Click back to 'How the EU GDPR will affect the use of Machine Learning - Part 1' for links to all the blog posts in this series.

Monday, July 24, 2017

Part 4b - (Article 22: Profiling) Why me? and how Oracle 12c saves the day

This is the fourth part of a series of blog posts on 'How the EU GDPR will affect the use of Machine Learning'

In this blog post (Part 4b) I will examine some of the more technical aspects and how the in-database machine learning functions save the day!

Probably in most cases where machine learning has been used and/or deployed in your company to analyse, profile and predict customers, it is more than likely that some sort of black box machine learning has been used.

Typical black box machine learning will include using algorithms like Neural Networks, but this can be extended to other algorithms, within the context of the EU GDPR requirements, such as SVMs, GLM, etc. Additionally, most companies don't just use one algorithm to make a decision on a customer. Many algorithms and rules-based decision making can be used together, using some sort of voting system, to determine if a customer is targeted in a certain way.

Basically, none of these really support the requirements of the EU GDPR.

In most cases we need to go back to basics. Back to simpler approaches to machine learning for customer profiling and prediction. This means no more ensemble models, for now, unless you can explain why a customer was selected. This means having to use simple algorithms like Decision Trees, at a push Naive Bayes, and some well defined rules-based methods. All of these approaches allow us to see and understand why a customer was selected and, based on Article 22, to explain why.
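To show why a decision tree is easy to explain, here is a small Python sketch of a hand-written tree that returns both the prediction and the list of rules that led to it. The tree, the attribute names (`age`, `income`), the thresholds and the labels are all made up for the illustration and do not come from any real model.

```python
# A tiny, hand-written decision tree (hypothetical attributes and thresholds).
TREE = {
    "attribute": "age", "threshold": 30,
    "left": {"label": "no_offer"},
    "right": {
        "attribute": "income", "threshold": 50000,
        "left": {"label": "no_offer"},
        "right": {"label": "offer"},
    },
}


def predict_with_reasons(tree, customer):
    """Walk the tree and record every rule that fired on the way to a leaf."""
    reasons = []
    node = tree
    while "label" not in node:
        attr, thr = node["attribute"], node["threshold"]
        if customer[attr] < thr:
            reasons.append(f"{attr} < {thr}")
            node = node["left"]
        else:
            reasons.append(f"{attr} >= {thr}")
            node = node["right"]
    return node["label"], reasons


label, reasons = predict_with_reasons(TREE, {"age": 42, "income": 61000})
print(label, reasons)  # prints: offer ['age >= 30', 'income >= 50000']
```

The list of rules is exactly the kind of explanation you could hand to a customer. A voting ensemble of many such models has no single path like this to point at.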

But there is some hope. Some of the commercial machine learning vendors already have some prediction insights built into their software. Very few, if any, open source solutions have this capability.

For example, Oracle introduced a new function called PREDICTION_DETAILS in Oracle 12.1 and this was expanded in Oracle 12.2 to cover all their in-database machine learning algorithms.

The following is an example of using this function for an SVM model. When you examine the boxes in the following image you can see that a slightly different set of attributes and the values of these attributes are listed. Each box corresponds to a different customer. This means we can give an explanation of why a customer was selected. Oracle 12c saves the day.

    select cust_id,
           prediction(clas_svm_1_27 using *) pred_value,
           prediction_probability(clas_svm_1_27 using *) pred_prob,
           prediction_details(clas_svm_1_27 using *) pred_details
    from mining_data_apply_v;

If you have a look at other commercial machine learning solutions, you will find some give similar functionality or that it will be available soon. Can we get the same level of detail from open source solutions? Not really, unless you are using Decision Trees and maybe Naive Bayes. This means that companies that have gone down the pure open source route for their machine learning may have to look at using alternative software and may have to fork out some hard earned dollars/euros to make sure that they are compliant with Article 22 of the EU GDPR.

Click back to 'How the EU GDPR will affect the use of Machine Learning - Part 1' for links to all the blog posts in this series.

Monday, July 17, 2017

Part 4a - (Article 22: Profiling) Why me? and how Oracle 12c saves the day

This is the fourth part of a series of blog posts on 'How the EU GDPR will affect the use of Machine Learning'

In this blog post (Part 4a) I will discuss the specific issues relating to the use of machine learning algorithms and models. In the next blog post (Part 4b) I will examine some of the more technical aspects and how the in-database machine learning functions save the day!

The EU GDPR has some rules that will affect the use of machine learning models for predicting customers.

As with all the other sections of the EU GDPR, the rules on the use of machine learning and profiling of individuals do not only affect organisations based within Europe, but affect all organisations around the globe that will be using these methods and associated data.

Article 22 of the EU GDPR deals with the “Automated individual decision-making, including profiling” and effectively creates a “right to explanation”. This means that an individual is entitled to an explanation of the decisions made by automated decision making models or profiling that has resulted in a decision being made about them. These new regulations present many challenges for organisations and their teams of data scientists.

To be able to give an explanation of the decision made by the machine learning models or by profiling, the underlying models and their associated algorithms need to be able to give details of the model processing and how the decision about the individual was reached. For most machine learning models and algorithms this is generally not possible. For a limited set of algorithms, for example decision trees, this is possible, but with other algorithms such as support vector machines, some regression models, and in particular neural networks, giving these explanations is not possible. Neural networks can be considered black box modelling and the others grey box modelling. But these algorithms are in widespread use in many organisations and are core to their predictive analytics solutions. This presents many challenges for organisations as they will need to look at alternative algorithms that may not have the same degree of predictive accuracy. With the recent rise of deep learning using neural networks, it is extremely difficult to explain a multilayer neural net with various learned weights between each of the nodes at each layer.

Ensemble machine learning methods like Random Forests are also a challenge. Although the underlying machine learning algorithm is explainable, the predictions of Random Forest, and other similar ensemble methods, result from an aggregation, averaging or voting process. Additionally, scenarios where machine learning models are combined with multiple other models, along with rules-based solutions, and where the predicted outcome is based on the aggregation or voting of all methods, may no longer be usable. The ability to explain a predicted outcome using ensemble methods may not be possible and this will affect their continued use for predictive analytics.

In addition to the requirements of Article 22, Articles 13 and 14 state that a person has a right to meaningful information about the logic involved in profiling the person.

Over the past few years many of the commercially available machine learning solutions have been preparing for the changes required to meet the EU GDPR. Some vendors have been able to add in greater model explanation features as well as specific explanations for each of the individual predictions. Many other vendors are still working on adding the required level of explanations and some of these may not be available in time for when the EU GDPR goes live in May 2018. This will present many challenges for organisations around the world who will be using data gathered within the EU region.

For machine learning based on open source languages and tools the EU GDPR presents a very different challenge. While a small number of these come with some simple explanations for some of the more basic machine learning algorithms, there seems to be little information available on what work is currently being done to update these languages and tools. The limiting factor in making the required updates in the open source community is that there is no commercial push to do so. As a result of these limitations, many organisations may be forced into using commercial machine learning products, but for many other organisations the cost of doing so will be prohibitive.

It is clear that the tasks of building machine learning models have become significantly more complex with the introduction of the new EU GDPR. This complexity applies to the selection of what data can be used, ensuring there is no inherent discrimination in the machine learning models, and the ability of these models to give an explanation of how the predicted outcome was determined. Companies around the world need to address these issues, and in doing so may limit what software and algorithms can be used for customer profiling and predictive analytics. Although some of the commercially available machine learning languages and products can give the required insights, more product enhancements are required. Many challenges are facing the machine learning open source community, with many research groups only starting in recent months to look at how their languages, packages and tools can be enhanced to meet the requirements of the EU GDPR.

Click back to 'How the EU GDPR will affect the use of Machine Learning - Part 1' for links to all the blog posts in this series.

Wednesday, July 12, 2017

PRECIS R package

If you use R then you are very familiar with the SUMMARY function.

If you use R then you are very familiar with the name Hadley Wickham. He has produced some really cool packages for R.

He has produced a new R package and function that complements the commonly used SUMMARY R function.

The following outlines how you can install this new R package from GitHub (Hadley's GitHub is https://github.com/hadley/).

Install the R devtools package. This will allow you to download the package code from GitHub.

install.packages("devtools")

Install the package from Hadley's GitHub repository.

devtools::install_github("hadley/precis")

Load the library.

library(precis)

The following displays the information produced by the SUMMARY and the PRECIS functions.

> summary(mtcars)

mpg cyl disp hp drat wt

Min. :10.40 Min. :4.000 Min. : 71.1 Min. : 52.0 Min. :2.760 Min. :1.513

1st Qu.:15.43 1st Qu.:4.000 1st Qu.:120.8 1st Qu.: 96.5 1st Qu.:3.080 1st Qu.:2.581

Median :19.20 Median :6.000 Median :196.3 Median :123.0 Median :3.695 Median :3.325

Mean :20.09 Mean :6.188 Mean :230.7 Mean :146.7 Mean :3.597 Mean :3.217

3rd Qu.:22.80 3rd Qu.:8.000 3rd Qu.:326.0 3rd Qu.:180.0 3rd Qu.:3.920 3rd Qu.:3.610

Max. :33.90 Max. :8.000 Max. :472.0 Max. :335.0 Max. :4.930 Max. :5.424

qsec vs am gear carb

Min. :14.50 Min. :0.0000 Min. :0.0000 Min. :3.000 Min. :1.000

1st Qu.:16.89 1st Qu.:0.0000 1st Qu.:0.0000 1st Qu.:3.000 1st Qu.:2.000

Median :17.71 Median :0.0000 Median :0.0000 Median :4.000 Median :2.000

Mean :17.85 Mean :0.4375 Mean :0.4062 Mean :3.688 Mean :2.812

3rd Qu.:18.90 3rd Qu.:1.0000 3rd Qu.:1.0000 3rd Qu.:4.000 3rd Qu.:4.000

Max. :22.90 Max. :1.0000 Max. :1.0000 Max. :5.000 Max. :8.000

> precis(mtcars)

# data.frame [32 x 11]

name type precis

1 mpg dbl 10.4 [ 15.4 ( 19.2) 22.8] 33.9

2 cyl dbl 4 (11) 6 (7) 8 (14)

3 disp dbl 71.1 [121.0 (196.0) 334.0] 472.0

4 hp dbl 52 [ 96 ( 123) 180] 335

5 drat dbl 2.76 [ 3.08 ( 3.70) 3.92] 4.93

6 wt dbl 1.51 [ 2.54 ( 3.32) 3.65] 5.42

7 qsec dbl 14.5 [ 16.9 ( 17.7) 18.9] 22.9

8 vs dbl 0 (18) 1 (14)

9 am dbl 0 (19) 1 (13)

10 gear dbl 3 (15) 4 (12) 5 (5)

11 carb dbl 1 [ 2 ( 2) 4] 8

> precis(mtcars, histogram=TRUE)

# data.frame [32 x 11]

name type precis

1 mpg dbl 10.4 ▂▁▇▃▅▅▂▂▁▁▂▂ 33.9

2 cyl dbl 4 ▅▁▁▁▁▁▁▁▁▃▁▁▁▁▁▁▁▁▁▇ 8

3 disp dbl 71.1 ▅▁▁▃▇▂▁▁▁▁▃▁▃▁▅▁▁▁▁▁▁ 472.0

4 hp dbl 52 ▁▅▅▇▂▂▇▁▂▁▂▁▁▁▁ 335

5 drat dbl 2.76 ▂▂▇▂▁▅▇▃▂▁▁▁ 4.93

6 wt dbl 1.51 ▁▁▂▂▁▁▂▁▂▁▇▂▂▁▁▁▁▁▁▂▁ 5.42

7 qsec dbl 14.5 ▂▂▁▁▃▇▅▁▇▂▂▂▁▁▁▁▁ 22.9

8 vs dbl 0 ▇▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▅ 1

9 am dbl 0 ▇▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▁▅ 1

10 gear dbl 3 ▇▁▁▁▁▁▁▁▁▅▁▁▁▁▁▁▁▁▁▂ 5

11 carb dbl 1 ▅▇▁▂▁▇▁▁▁▁▁▁▁▁ 8

Monday, July 10, 2017

Part 3 - Ensuring there is no Discrimination in the Data and machine learning models

This is the third part of a series of blog posts on 'How the EU GDPR will affect the use of Machine Learning'

The new EU GDPR has some new requirements that will affect what data can be used, to ensure there is no discrimination. Additionally, the machine learning models need to ensure that there is no discrimination in the predictions they make. There is an underlying assumption that the organisation has the right to use the data about individuals and that this data has been legitimately obtained. The following outlines the areas relating to discrimination:

- Discrimination based on unfair treatment of an individual through the use of certain variables that may be inherently discriminatory, for example race, gender, etc., and any decisions, whether based on machine learning methods or not, that are based on an individual belonging to one or more of these groups. This is particularly challenging for data scientists and it can limit some of the data points that can be included in their data sets.

- All data mining models need to be tested to ensure that there is no discrimination built into them. Although the data scientist may have removed any obvious variables that may cause discrimination, the machine learning models may still discover some bias or discrimination based on the patterns found in the data.

- The text preceding the EU GDPR (paragraph 71) details the requirements for data controllers to “implement appropriate technical and organizational measures” that “prevent, inter alia, discriminatory effects” based on sensitive data. Paragraph 71 and Article 22 paragraph 4 address discrimination based on profiling (using machine learning and other methods) that uses sensitive data. Care is needed to remove any associated correlated data.

- If one group of people is under represented in a training data set then, depending on the type of prediction being used, the model may unknowingly discriminate against this group when it comes to making a prediction. The training data sets will need to be carefully partitioned and separate machine learning models built on each partition to ensure that such discrimination does not occur.

In the next blog post I will look at addressing the issues relating to Article 22 on the right to an explanation of the outcomes of automated individual decision-making, including profiling using machine learning and other methods.
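As a starting point for the last item in the list above, a quick check of how each group is represented in the training data can be sketched in Python as follows. The 10% threshold, the `region` attribute and the function name are assumptions chosen for the example. They are not a legal or statistical standard, and passing this check does not prove a model is free of discrimination.

```python
from collections import Counter


def under_represented(records, attribute, min_share=0.10):
    """Return the groups whose share of the records is below min_share.

    min_share is an arbitrary illustrative threshold, not a GDPR requirement.
    """
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    return {
        group: count / total
        for group, count in counts.items()
        if count / total < min_share
    }


# Hypothetical training set: 95 records for one group and 5 for another.
training = [{"region": "A"}] * 95 + [{"region": "B"}] * 5
print(under_represented(training, "region"))  # prints {'B': 0.05}
```

Any group that is reported here is a candidate for the careful partitioning, or additional data collection, before the models are built.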

Click back to 'How the EU GDPR will affect the use of Machine Learning - Part 1' for links to all the blog posts in this series.

Monday, July 3, 2017

Part 2 - Do I have permissions to use the data for data profiling?

This is the second part of a series of blog posts on 'How the EU GDPR will affect the use of Machine Learning'

I have the data, so I can use it? Right?

I can do what I want with that data? Right? (sure the customer won't know!)

NO. The answer is No you cannot use the data unless you have been given the permission to use it for a particular task.

The GDPR applies to all companies worldwide that process personal data of European Union (EU) citizens. This means that any company that works with information relating to EU citizens will have to comply with the requirements of the GDPR, making it the first global data protection law.

The GDPR tightens the rules for obtaining valid consent to using personal information. Having the ability to prove valid consent for using personal information is likely to be one of the biggest challenges presented by the GDPR. Organisations need to ensure they use simple language when asking for consent to collect personal data, they need to be clear about how they will use the information, and they need to understand that silence or inactivity no longer constitutes consent.

You will need to investigate the small print of all the terms and conditions that your customers have signed. Then you need to examine what data you have, how and where it was collected or generated, and then determine if you can use this data beyond what the original intention was. If there has been no mention of using the customer data (or any part of it) for analytics, profiling, or anything vaguely related to it, then you cannot use the data. This could mean that you cannot use any data for your analytics and/or machine learning. This is a major problem. No data means no analytics and no targeting the customers with special offers, etc.

Data cannot be magically produced out of nowhere and it isn't the fault of the data science team if they have no data to use.

How can you overcome this major stumbling block?

The first place to start is to review all the T&Cs. Identify what data can be used and what data cannot be used. One approach for data that cannot be used is to update the T&Cs and get the customers to agree to them. Yes, they need to explicitly agree (or not) to them. Giving them a time limit to respond, and treating no response as agreement, is not allowed. It needs to be explicit.

Yes this will be hard work. Yes this will take time. Yes it will affect what machine learning and analytics you can perform for some time. But the sooner you can identify these areas, get the T&Cs updated and get the approval of the customers, the better. Ideally all of this should be done well in advance of 25th May, 2018.
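Once the T&Cs have been reviewed, the outcome for each customer can be recorded as the set of purposes they have explicitly agreed to. The Python sketch below shows one way to split customers on that basis. The `consented_purposes` field, the purpose name `profiling` and the function name are hypothetical. The one rule it does encode from the regulation, as described above, is that a missing answer is treated as no consent.

```python
def split_by_consent(customers, purpose):
    """Split customers into those usable for a purpose and those who are not.

    A customer with no recorded consent is never treated as having agreed.
    """
    usable, needs_consent = [], []
    for customer in customers:
        if purpose in customer.get("consented_purposes", ()):
            usable.append(customer)
        else:
            needs_consent.append(customer)
    return usable, needs_consent


# Hypothetical consent records.
customers = [
    {"cust_id": 1, "consented_purposes": {"billing", "profiling"}},
    {"cust_id": 2, "consented_purposes": {"billing"}},
    {"cust_id": 3},  # never responded to the updated T&Cs
]
usable, needs_consent = split_by_consent(customers, "profiling")
print([c["cust_id"] for c in usable])         # prints [1]
print([c["cust_id"] for c in needs_consent])  # prints [2, 3]
```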

In the next blog post I will look at addressing Discrimination in the data and in the machine learning models.

Click back to 'How the EU GDPR will affect the use of Machine Learning - Part 1' for links to all the blog posts in this series.

Tuesday, June 27, 2017

How the EU GDPR will affect the use of Machine Learning - Part 1

On 15 December 2015, the European Parliament, the Council and the Commission reached agreement on the new data protection rules, establishing a modern and harmonised data protection framework across the EU. Then on 14th April 2016 the Regulation and Directive were adopted by the European Parliament.

The EU GDPR comes into effect on the 25th May, 2018.

Are you ready?

The EU GDPR will affect every country around the world. As long as you capture and use/analyse data captured within the EU or about citizens in the EU then you have to comply with the EU GDPR.

Over the past few months we have seen an increase in the number of blog posts, articles, presentations, conferences, seminars, etc. being produced on how the EU GDPR will affect you. Basically, if your company has not been working on implementing processes and procedures, and ensuring it complies with the regulations, then you are a bit behind and a lot of work is ahead of you.

Like I said, there has been a lot published and talked about regarding the EU GDPR. Most of this is about the core aspects of the regulations on protecting and securing your data. But very little, if anything, is being discussed regarding the use of machine learning and customer profiling.

Do you use machine learning to profile, analyse and predict customers? Then the EU GDPR affects you.

Article 22 of the EU GDPR outlines some basic capabilities regarding machine learning, as do Articles 13, 14, 19 and 21.

Over the coming weeks I will have the following blog posts. Each of these addresses a separate issue, within the EU GDPR, relating to the use of machine learning.

- Part 2 - Do I have permissions to use the data for data profiling?

- Part 3 - Ensuring there is no Discrimination in the Data and machine learning models.

- Part 4a - (Article 22: Profiling) Why me? and how Oracle 12c saves the day

- Part 4b - (Article 22: Profiling) Why me? and how Oracle 12c saves the day

- Part 5 - The right to be forgotten (EU GDPR)

Thursday, June 22, 2017

Installing Scala and Apache Spark on a Mac

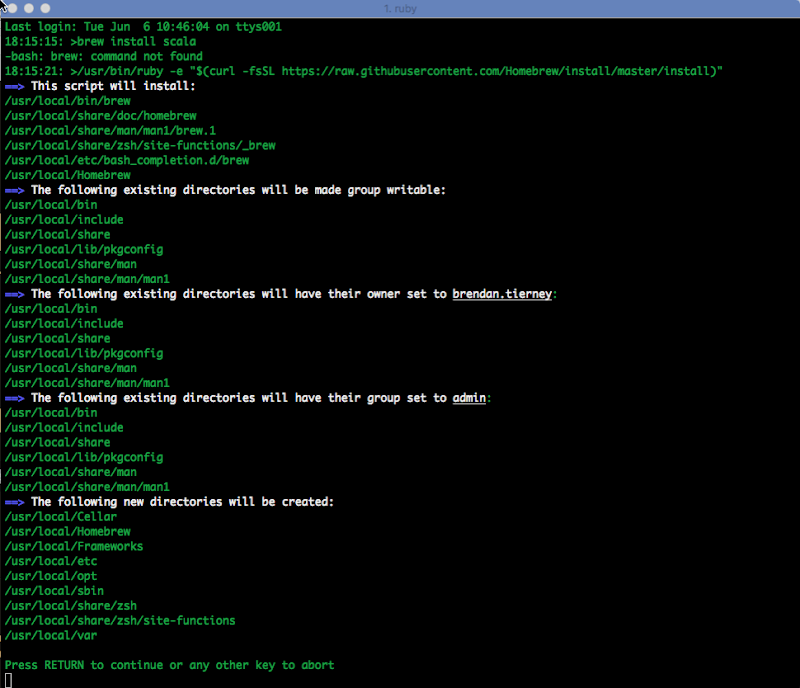

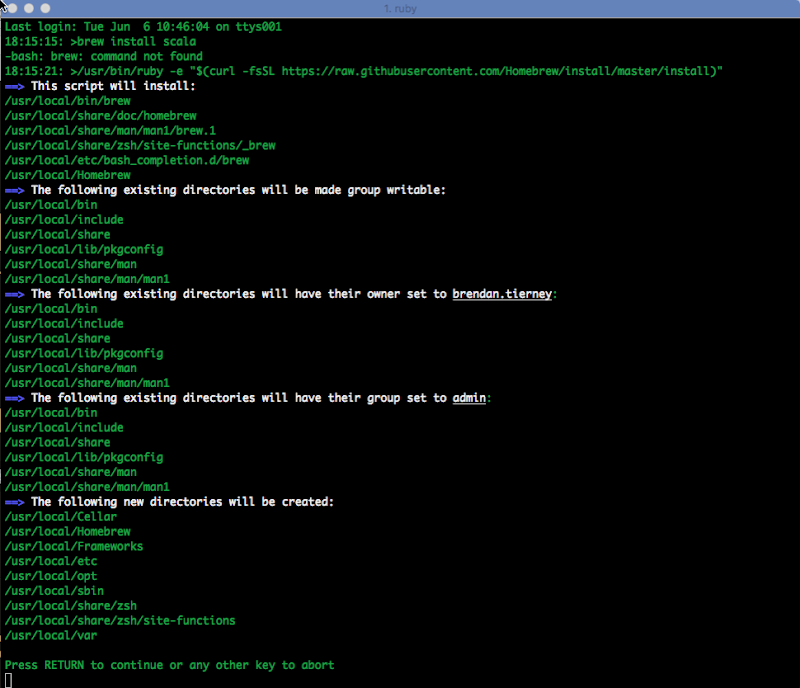

The following outlines the steps I've followed to get Scala and Apache Spark installed on my Mac. This allows me to play with Apache Spark on my laptop (single node) before deploying my code to a multi-node cluster.

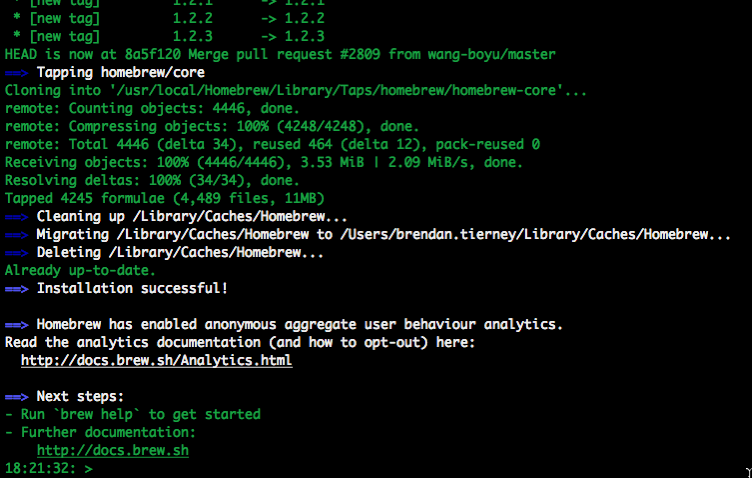

1. Install Homebrew

Homebrew seems to be the standard for installing anything on a Mac. To install Homebrew run:

/usr/bin/ruby -e "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/master/install)"

When prompted enter your system/OS password to allow the install to proceed.

2. Install xcode-select (if needed)

You may have xcode-select already installed. This tool allows you to install the languages using the command line.

xcode-select --install

If it is already installed then nothing will happen and you will get the following message.

xcode-select: error: command line tools are already installed, use "Software Update" to install updates

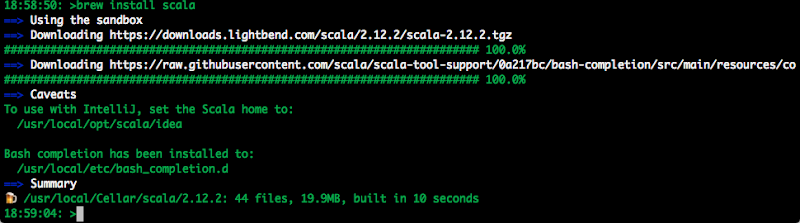

3. Install Scala

[If you haven't installed Java then you need to also do this.]

Use Homebrew to install scala.

brew install scala

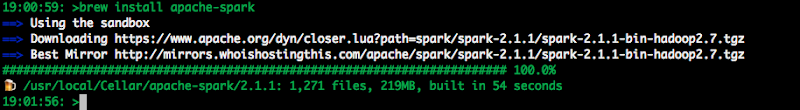

4. Install Apache Spark

Now to install Apache Spark.

brew install apache-spark

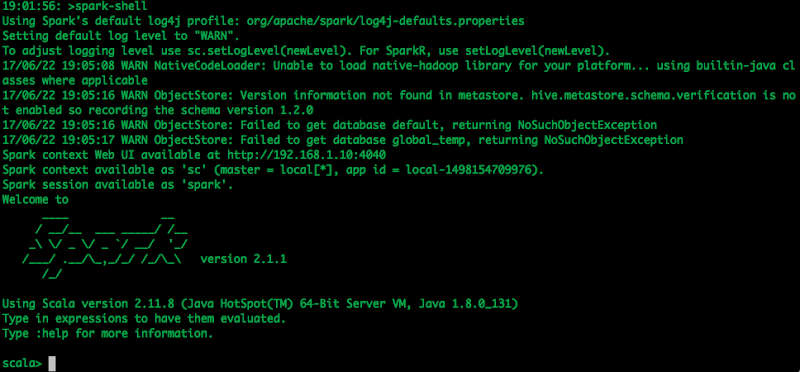
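If you re-run these steps later, steps 3 and 4 can be wrapped in a small script that skips anything already present. This is only a sketch under assumptions: the helper names `have` and `brew_install_if_missing` are made up for illustration (they are not part of Homebrew), and it assumes each formula puts a command of the given name on your PATH.

```shell
# Succeeds (exit status 0) if the named command can be found on the PATH.
have() {
    command -v "$1" >/dev/null 2>&1
}

# Install a Homebrew formula only when its command is missing.
#   $1 = command to look for (hypothetical check, e.g. spark-shell)
#   $2 = Homebrew formula to install (e.g. apache-spark)
brew_install_if_missing() {
    if have "$1"; then
        echo "$1 already installed"
    else
        brew install "$2"
    fi
}
```

For example, calling `brew_install_if_missing scala scala` and then `brew_install_if_missing spark-shell apache-spark` would only invoke `brew install` for whichever of the two is not yet on your PATH.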

5. Start Spark

Now you can start the Apache Spark shell.

spark-shell

6. Hello-World and Reading a file

The traditional Hello-World example.

scala> val helloWorld = "Hello-World"

helloWorld: String = Hello-World

or

scala> println("Hello World")

Hello World

What is my current working directory?

scala> val whereami = System.getProperty("user.dir")

whereami: String = /Users/brendan.tierney

Read and process a file.

scala> val lines = sc.textFile("docker_ora_db.txt")

lines: org.apache.spark.rdd.RDD[String] = docker_ora_db.txt MapPartitionsRDD[3] at textFile at <console>:24

scala> lines.count()

res6: Long = 36

scala> lines.foreach(println)

####################################################################

## Specify the basic DB parameters

## Copyright(c) Oracle Corporation 1998,2016. All rights reserved.##

## ##

##------------------------------------------------------------------

## Docker OL7 db12c dat file ##

## ##

## db sid (name)

####################################################################

## default : ORCL

## cannot be longer than 8 characters

##------------------------------------------------------------------

...

There will be a lot more on how to use Spark and how to use Spark with Oracle (all their big data stuff) over the coming months.

[I've been busy for the past few months working on this stuff, EU GDPR issues relating to machine learning, and other things. I'll be sharing some of what I've been working on and learning in blog posts over the coming weeks.]