In this fifth blog post in my series on using the capabilities of Oracle Text, Oracle R Enterprise and Oracle Data Mining to process documents and text, I will look at some of the machine learning features of Oracle Text.

Oracle Text comes with a number of machine learning algorithms. These can be divided into two types. The first is called 'Supervised Learning', where we have two machine learning algorithms for classification problems. The second is called 'Unsupervised Learning', where we can use clustering to look for patterns in our text documents and to find similarities between documents based on their contents.

It is this second type, document clustering, that I will work through in this blog post.

When using clustering with text documents, the machine learning algorithm will look for patterns that are common between the documents. These patterns will include the words used, the frequency of the words, the position or ordering of these words, the co-occurrence of words, etc. Yes, this is a large and complex task, and that is why we need a machine learning algorithm to help us.

With Oracle Text we only have one clustering machine learning algorithm available to use. When we move on to using the Oracle Advanced Analytics Option (Oracle Data Mining and Oracle R Enterprise) we have more algorithms available to us.

With Oracle Text the clustering algorithm is called k-Means. In a way the actual algorithm is unimportant, as it is the only one available to us when using Oracle Text. To use this algorithm we have the CTX_CLS.CLUSTERING procedure. This procedure takes the documents we want to compare, identifies the clusters (using hierarchical clustering), and then tells us, for each document, which clusters the document belongs to and the probability value for each.

With clustering, a document (or a record) can belong to many clusters. Typically in the textbooks we see clusters that are very distinct and clearly separated from each other. When you work on real data this is rarely the case. We will have many overlapping clusters, and a data point/record can belong to one or more clusters. This is why we need the probability value. We can use it to determine which cluster our record belongs to most and which other clusters it is associated with.

Using the example documents that I have been using during this series of blog posts, we can use the CTX_CLS.CLUSTERING procedure to cluster these documents and identify similarities between them.

First we need to set up the parameters that will be used by the CTX_CLS.CLUSTERING procedure. We tell it to use the k-Means algorithm and how many clusters to generate. As with all Oracle Text procedures or algorithms, there are a number of settings you can configure, or you can just accept the default values.

```sql
exec ctx_ddl.drop_preference('Cluster_My_Documents');
exec ctx_ddl.create_preference('Cluster_My_Documents','KMEAN_CLUSTERING');
exec ctx_ddl.set_attribute('Cluster_My_Documents','CLUSTER_NUM','3');
```

The code above is an example of the basics of what you need to set up for clustering. Other attributes available to you include MAX_DOCTERMS, MAX_FEATURES, THEME_ON, TOKEN_ON, STEM_ON, MEMORY_SIZE and SECTION_WEIGHT.
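On a first run the drop_preference call raises an error because the preference does not exist yet. The following is a minimal sketch of a re-runnable version of the setup. It assumes the CTX_USER_PREFERENCES view is visible to your schema, and the MAX_DOCTERMS and MAX_FEATURES values are arbitrary illustrations, not recommendations.

```sql
DECLARE
  v_count PLS_INTEGER;
BEGIN
  -- Assumption: preference names are stored in upper case in CTX_USER_PREFERENCES
  SELECT COUNT(*)
  INTO   v_count
  FROM   ctx_user_preferences
  WHERE  pre_name = 'CLUSTER_MY_DOCUMENTS';

  -- Only drop the preference if an earlier run created it
  IF v_count > 0 THEN
    ctx_ddl.drop_preference('Cluster_My_Documents');
  END IF;

  ctx_ddl.create_preference('Cluster_My_Documents', 'KMEAN_CLUSTERING');
  ctx_ddl.set_attribute('Cluster_My_Documents', 'CLUSTER_NUM', '3');

  -- Illustrative values only; leave these out to accept the defaults
  ctx_ddl.set_attribute('Cluster_My_Documents', 'MAX_DOCTERMS', '100');
  ctx_ddl.set_attribute('Cluster_My_Documents', 'MAX_FEATURES', '1000');
END;
/
```

Check the Oracle Text reference for your database version before changing any of the optional attributes, as the allowed ranges and defaults may differ.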

Now we can run the CTX_CLS.CLUSTERING procedure on our documents. This procedure has the following parameters.

- The Oracle Text Index Name

- Document Id Column Name

- Document Assignment (cluster assignment) Table Name. This table will be created if it doesn't already exist.

- Cluster Description Table Name. This table will be created if it doesn't already exist.

- Name of the Oracle Text Preference (list)

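```sql
-- SQL*Plus EXEC must fit on one line, so the call is wrapped in a PL/SQL block
BEGIN
  ctx_cls.clustering(
    'MY_DOCUMENTS_OT_IDX',    -- Oracle Text index name
    'DOC_PK',                 -- document id column name
    'OT_CLUSTER_RESULTS',     -- document assignment table
    'DOC_CLUSTER_DETAILS',    -- cluster description table
    'Cluster_My_Documents');  -- clustering preference
END;
/
```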

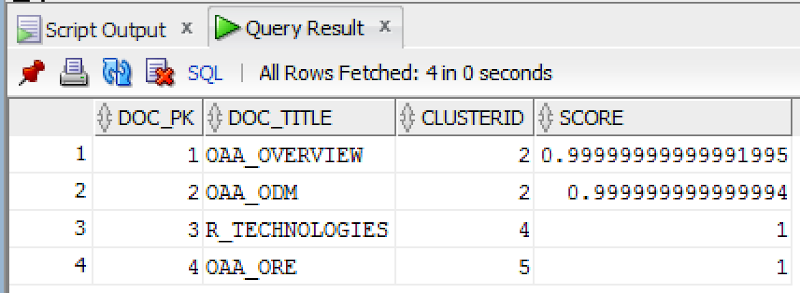

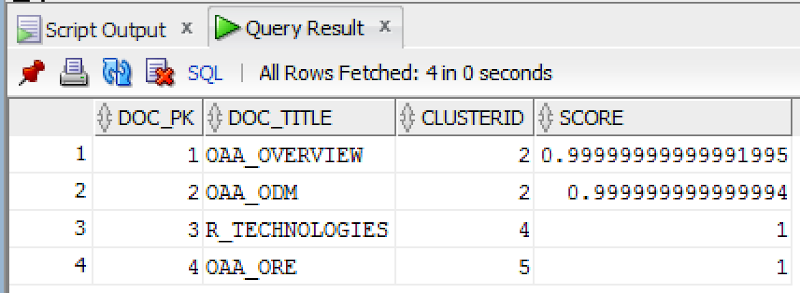
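Because the procedure creates the two output tables for you, it can be worth checking what was actually created before querying them. A small sketch using the standard USER_TAB_COLUMNS dictionary view, assuming the tables were created in your own schema with the upper-case names used above:

```sql
-- List the columns of the two tables generated by CTX_CLS.CLUSTERING
SELECT table_name,
       column_name,
       data_type
FROM   user_tab_columns
WHERE  table_name IN ('OT_CLUSTER_RESULTS', 'DOC_CLUSTER_DETAILS')
ORDER  BY table_name, column_id;
```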

When the procedure has completed we can now examine the OT_CLUSTER_RESULTS and the DOC_CLUSTER_DETAILS tables. The first of these (OT_CLUSTER_RESULTS) allows us to see what documents have been clustered together. The following is what was produced for my documents.

```sql
SELECT d.doc_pk,
       d.doc_title,
       r.clusterid,
       r.score
FROM   my_documents d,
       ot_cluster_results r
WHERE  d.doc_pk = r.docid;
```

We can see that two of the documents have been grouped into the same cluster (ClusterId=2). If you look back at what these documents are about, you can see that they are indeed very similar. The other two documents have been placed in separate clusters (ClusterId=4 & 5). The clustering algorithm has decided that they are different types of documents. Again, when you examine these documents you will see that they are talking about different topics. So the clustering process worked!

You can also explore the various features of the clusters by looking at the DOC_CLUSTER_DETAILS table. The details in this table are not overly useful, but they will give you some insight into what clusters the k-Means algorithm has produced.
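Since a document can be assigned to more than one cluster, it can help to keep only the strongest assignment for each document. The following is a sketch of one way to do that, using the same tables and columns as the query above and treating the highest SCORE as the best match (an assumption about how you want to interpret the score):

```sql
-- Keep only the highest-scoring cluster for each document
SELECT doc_pk,
       doc_title,
       clusterid,
       score
FROM  (SELECT d.doc_pk,
              d.doc_title,
              r.clusterid,
              r.score,
              ROW_NUMBER() OVER (PARTITION BY r.docid
                                 ORDER BY r.score DESC) AS rn
       FROM   my_documents d
              JOIN ot_cluster_results r
                ON d.doc_pk = r.docid)
WHERE  rn = 1
ORDER  BY clusterid, doc_pk;
```

Removing the `rn = 1` filter and ordering by `doc_pk, rn` would instead list every cluster a document is associated with, strongest first.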
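A quick way to look at the cluster descriptions is sketched below. The column names (CLUSTERID, PARENT, SZE, QUALITY_SCORE, LABEL, DESCRIPT) are what I would expect from the Oracle Text documentation for the cluster description table, so treat them as an assumption and run a DESCRIBE on DOC_CLUSTER_DETAILS first if the query fails.

```sql
-- Assumed column names; verify with: DESCRIBE doc_cluster_details
SELECT clusterid,
       parent,         -- parent cluster in the hierarchy
       sze,            -- number of documents assigned to the cluster
       quality_score,
       label,
       descript        -- terms that describe the cluster
FROM   doc_cluster_details
ORDER  BY clusterid;
```

Because the clusters are built hierarchically, the PARENT column may help explain why the cluster ids in the results are not simply numbered 1 to 3.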

Hopefully I've shown you how easy it is to set up and use the clustering feature of Oracle Text.

WARNING: Before using Clustering or Classification with Oracle Text, you need to check with your local Oracle Sales representative about whether there is a licence implication. There are some mentions that the algorithms used are those that come with Oracle Data Mining. Oracle Data Mining is a licence cost option for the database. So make sure you check before you go using these features.