To extend the advanced insights that you can get from using ODM, you can combine ODM with your OBIEE dashboards to gain deeper insight into your data. This combines data mining techniques with visualization techniques.

The purpose of this blog post is to show you the steps involved in adding an ODM model to your OBIEE dashboards. Lots of people have been asking for the details of how to do it, so here it is.

The following example is based on a presentation that I have given a few times (OUG Ireland, UKOUG, OOW) with Antony Heljula.

1. Export & Import the ODM model

If your data mining analysis and development was completed in a different database from the one where your OBIEE data resides, then you will need to move the ODM model from the ODM/development database to the OBIEE database.

ODM provides two PL/SQL procedures that allow you to move your ODM model easily. These procedures are part of the DBMS_DATA_MINING package. To export a model, you use the DBMS_DATA_MINING.EXPORT_MODEL procedure. Similarly, to import your exported ODM model, you use the DBMS_DATA_MINING.IMPORT_MODEL procedure.
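As a rough sketch of what these two calls can look like: the directory object `DATA_PUMP_DIR` and the dump file name `clas_decision_tree_exp` are my assumptions for illustration, and the model name is the one used in the scoring query later in this post. Check the directory for the actual dump file name before importing, as the export normally adds a numeric suffix and a `.dmp` extension.

```sql
-- Run on the ODM/development database.
-- Assumption: a directory object called DATA_PUMP_DIR exists and is writable by this user.
BEGIN
  DBMS_DATA_MINING.EXPORT_MODEL(
    filename     => 'clas_decision_tree_exp',            -- hypothetical file name
    directory    => 'DATA_PUMP_DIR',
    model_filter => 'name = ''CLAS_DECISION_TREE''');    -- export only this model
END;
/

-- Copy the dump file to a directory that the OBIEE database can read,
-- then run the import on the OBIEE database.
BEGIN
  DBMS_DATA_MINING.IMPORT_MODEL(
    filename     => 'clas_decision_tree_exp01.dmp',      -- assumed name of the file written by the export
    directory    => 'DATA_PUMP_DIR',
    model_filter => 'name = ''CLAS_DECISION_TREE''');
END;
/
```

If you leave out `model_filter`, all the models in the schema (for export) or in the dump file (for import) are processed.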

2. Create a view that uses the ODM model

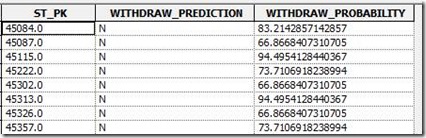

You can create a view that uses the PREDICTION and PREDICTION_PROBABILITY functions to apply the imported ODM model to your data. For example, the following query is used in a view to score our customer data, predicting whether each customer is going to churn and giving the probability that this prediction is correct.

    SELECT st_pk,
           prediction(clas_decision_tree using *) WITHDRAW_PREDICTION,
           prediction_probability(clas_decision_tree using *) WITHDRAW_PROBABILITY
    FROM CUSTOMER_DATA;
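To turn this query into a view, you can wrap it in a CREATE VIEW statement. This is only a sketch: the view name `CUSTOMER_CHURN_SCORES_V` is a hypothetical name that I have made up for illustration, so use whatever naming standard you have for your OBIEE schema.

```sql
-- Hypothetical view name; the query is the scoring query shown above.
CREATE OR REPLACE VIEW customer_churn_scores_v AS
SELECT st_pk,
       -- the class predicted by the decision tree model
       prediction(clas_decision_tree USING *)             AS withdraw_prediction,
       -- the probability of that predicted class
       prediction_probability(clas_decision_tree USING *) AS withdraw_probability
FROM   customer_data;
```

Because the model is applied when the view is queried, the predictions always reflect the current contents of CUSTOMER_DATA.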

3. Import the view into the Physical layer of the BI Repository (RPD)

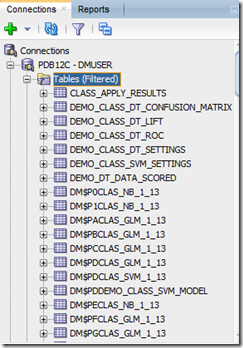

The view was then imported into the Physical layer of the BI Repository (RPD), where it was joined on its primary key to the other customer tables (we had one record per customer in the view). With the tables joined, we can use the prediction columns to filter the customer data. For example, we can filter for all the customers who are likely to churn: WITHDRAW_PREDICTION = 'N'
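The join itself is defined in the RPD, but the SQL that ends up being run against the database would look something like the following sketch. The view name `CUSTOMER_CHURN_SCORES_V`, the table `CUSTOMERS` and its column `CUSTOMER_NAME` are hypothetical names used only for illustration.

```sql
-- Assumptions: CUSTOMER_CHURN_SCORES_V is the scoring view from step 2,
-- and CUSTOMERS is one of the other customer tables, keyed on ST_PK.
SELECT c.st_pk,
       c.customer_name,            -- hypothetical column
       s.withdraw_prediction,
       s.withdraw_probability
FROM   customers c
       JOIN customer_churn_scores_v s
         ON s.st_pk = c.st_pk      -- one scored record per customer
WHERE  s.withdraw_prediction = 'N';
```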

![clip_image002[11] clip_image002[11]](http://lh4.ggpht.com/-MHDHjr06HXs/Ujrpgd60ebI/AAAAAAAAK0s/bKfCmJ0Ajqc/clip_image002%25255B11%25255D_thumb.jpg?imgmax=800)

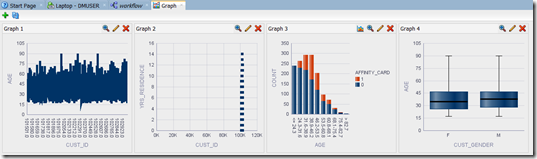

![clip_image002[13] clip_image002[13]](http://lh5.ggpht.com/-8KxEpH3u3Zk/Ujrpiuv4C3I/AAAAAAAAK08/v_85EJk_SVg/clip_image002%25255B13%25255D_thumb%25255B1%25255D.jpg?imgmax=800)

4. Add the new columns to the Business Model layer

The new prediction columns were then mapped into the Business Model layer, where they could be incorporated into various relevant calculations (e.g. % Withdrawals Predicted) and then presented to the end users for reporting.
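In the RPD this kind of measure is defined as a logical column, but to give an idea of what a calculation like % Withdrawals Predicted works out, here is a rough SQL equivalent. It assumes a scoring view called `CUSTOMER_CHURN_SCORES_V` (a hypothetical name) and uses the same class label as the filter example in step 3; substitute whichever target value marks a withdrawal in your data.

```sql
-- Percentage of scored customers that fall into the predicted class.
SELECT ROUND(100 * SUM(CASE WHEN withdraw_prediction = 'N' THEN 1 ELSE 0 END)
                 / NULLIF(COUNT(*), 0), 2) AS pct_withdrawals_predicted
FROM   customer_churn_scores_v;
```

The NULLIF is there so that an empty view gives a NULL result instead of a divide by zero error.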

![clip_image002[9] clip_image002[9]](http://lh6.ggpht.com/-DYlJTyDS0W0/Ujrpk4VwBYI/AAAAAAAAK1M/dHMA_4soYQM/clip_image002%25255B9%25255D_thumb.jpg?imgmax=800)

5. Add to your Dashboards

The Withdraw prediction columns could then be published on the BI Dashboards, where they could be used to filter the data content. In the example below, the user has chosen to show data for only those customers who are predicted to Withdraw with a probability rating of >70%.
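If you want to check what the dashboard filter should return, you can run a similar filter directly against the scoring view. This is a sketch under a few assumptions: the view name `CUSTOMER_CHURN_SCORES_V` is hypothetical, the class label is the one from the filter example in step 3, and the probability is on the 0 to 1 scale returned by PREDICTION_PROBABILITY, so 70% becomes 0.7.

```sql
SELECT st_pk,
       withdraw_prediction,
       ROUND(withdraw_probability * 100, 1) AS withdraw_probability_pct
FROM   customer_churn_scores_v
WHERE  withdraw_prediction  = 'N'     -- substitute the label that marks a withdrawal in your data
AND    withdraw_probability > 0.7     -- probability rating of more than 70%
ORDER  BY withdraw_probability DESC;
```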

![clip_image002[5] clip_image002[5]](http://lh4.ggpht.com/-YUOYkOK_SK0/UjrpnBmNOII/AAAAAAAAK1c/AgC69FJb6x4/clip_image002%25255B5%25255D_thumb%25255B1%25255D.jpg?imgmax=800)