A new feature of Oracle Data Mining in Oracle 12.2 is the set of Model Details views.

From Oracle 11.2.0.3 up to Oracle 12.1 you needed to use a range of PL/SQL functions (in the DBMS_DATA_MINING package) to inspect the details of a data mining/machine learning model using SQL.

Check out these previous blog posts for some examples of how to use and extract model details in Oracle 12.1 and earlier versions of the database:

Association Rules in ODM-Part 3

Extracting the rules from an ODM Decision Tree model

Instead of these functions, there are now many DB views available to inspect the details of a model. The following table summarises these DB views. Check out the DB views I've listed after the table as well, as these might be some of the ones you end up using most often.

I've no chance of remembering all of these, so this table is a quick reference for me to find the DB views I need to use. The naming method used is very confusing, but I'm sure in time I'll get the hang of it.

NOTE: For the DB Views I've listed in the following table, you will need to append the name of the ODM model to the view prefix that is listed in the table.
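To make the naming rule concrete, here is a minimal sketch in Python that builds a view name by appending the model name to a view prefix and wraps it in a simple query. The helper names (`model_detail_view`, `select_all`) and the model name `my_assoc_model` are hypothetical. The upper-casing is an assumption that the model was created with an unquoted name, which Oracle stores in upper case.

```python
import re


def model_detail_view(prefix: str, model_name: str) -> str:
    """Build a model detail view name: view prefix followed by the model name."""
    # Prefixes in the table all look like DM$V plus one letter, e.g. DM$VR.
    if not re.fullmatch(r"DM\$V[A-Z]", prefix):
        raise ValueError(f"unexpected view prefix: {prefix!r}")
    # Only accept simple identifier characters, so the name is safe to
    # place directly into a SQL string.
    if not re.fullmatch(r"[A-Za-z][A-Za-z0-9_$#]*", model_name):
        raise ValueError(f"unexpected model name: {model_name!r}")
    # Assumption: the model name was not quoted when the model was created,
    # so it is stored in upper case.
    return prefix + model_name.upper()


def select_all(prefix: str, model_name: str) -> str:
    """Return a SELECT statement that reads every column of the view."""
    return f"SELECT * FROM {model_detail_view(prefix, model_name)}"


if __name__ == "__main__":
    # Hypothetical Association Rules model called my_assoc_model.
    print(select_all("DM$VR", "my_assoc_model"))
```

The generated statement can then be run in SQL Developer, SQL*Plus or whatever client you normally use.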

| Data Mining Type | Algorithm & Model Details | 12.2 DB View | Description |

|---|---|---|---|

| Association | Association Rules | DM$VR | generated rules for Association Rules |

| Frequent Itemsets | DM$VI | describes the frequent itemsets | |

| Transaction Itemsets | DM$VT | describes the transactional itemsets view | |

| Transactional Rules | DM$VA | describes the transactional rule view and transactional itemsets | |

| Classification | (General views for Classification models) | DM$VT DM$VC |

describes the target distribution for Classification models describes the scoring cost matrix for Classification models |

| Decision Tree | DM$VP DM$VI DM$VO DM$VM |

describes the DT hierarchy & the split info for each level in DT describes the statistics associated with individual tree nodes Higher level node description describes the cost matrix used by the Decision Tree build |

|

| Generalized Linear Model | DM$VD DM$VA |

describes model info for Linear Regres & Logistic Regres describes row level info for Linear Regres & Logistic Regres |

|

| Naive Bayes | DM$VP DM$VV |

describes the priors of the targets for Naïve Bayes describes the conditional probabilities of Naïve Bayes model |

|

| Support Vector Machine | DM$VL | describes the coefficients of a linear SVM algorithm | |

| Regression ??? | Doe | 80 | 50 |

| Clustering | (General views for Clustering models) | DM$VD DM$VA DM$VH DM$VR |

Cluster model description Cluster attribute statistics Cluster historgram statistics Cluster Rule statistics |

| k-Means | DM$VD DM$VA DM$VH DM$VR |

k-Means model description k-Means attribute statistics k-Means historgram statistics k-Means Rule statistics |

|

| O-Cluster | DM$VD DM$VA DM$VH DM$VR |

O-Cluster model description O-Cluster attribute statistics O-Cluster historgram statistics O-Cluster Rule statistics |

|

| Expectation Minimization | DM$VO DM$VB DM$VI DM$VF DM$VM DM$VP |

describes the EM components the pairwise Kullback–Leibler divergence attribute ranking similar to that of Attribute Importance parameters of multi-valued Bernoulli distributions mean & variance parameters for attributes by Gaussian distribution the coefficients used by random projections to map nested columns to a lower dimensional space |

|

| Feature Extraction | Non-negative Matrix Factorization | DM$VE DM$VI |

Encoding (H) of a NNMF model H inverse matrix for NNMF model |

| Singular Value Decomposition | DM$VE DM$VV DM$VU |

Associated PCA information for both classes of models describes the right-singular vectors of SVD model describes the left-singular vectors of a SVD model |

|

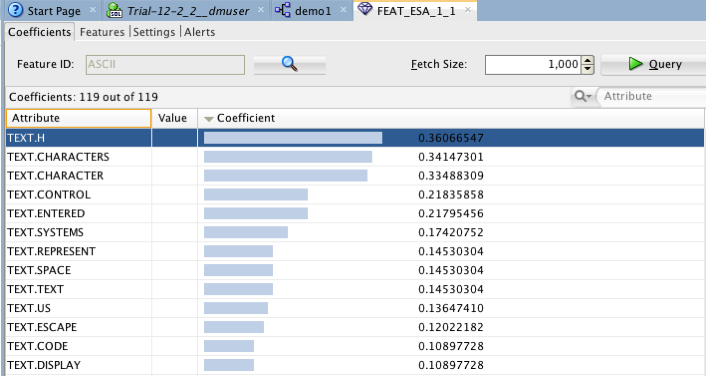

| Explicit Semantic Analysis | DM$VA DM$VF |

ESA attribute statistics ESA model features |

|

| Feature Section | Minimum Description Length | DM$VA | describes the Attribute Importance as well as the Attribute Importance rank |

Normalizing and Error Handling views created by ODM Automatic Data Preparation (ADP)

- DM$VN : Normalization and Missing Value Handling

- DM$VB : Binning

Global Model Views

- DM$VG : Model global statistics

- DM$VS : Computed model settings

- DM$VW : Alerts issued during model creation
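Since the ADP and global views are not tied to one algorithm, they are handy to keep as a small lookup. The sketch below, again in Python, lists their full names for a given model. The dictionary name, the function name and the model name `nb_model` are all hypothetical, and the code does not check whether each view actually exists in your schema for that model.

```python
# View prefixes and descriptions copied from the two lists above.
COMMON_VIEW_PREFIXES = {
    "DM$VN": "Normalization and Missing Value Handling",
    "DM$VB": "Binning",
    "DM$VG": "Model global statistics",
    "DM$VS": "Computed model settings",
    "DM$VW": "Alerts issued during model creation",
}


def common_views(model_name: str) -> dict:
    """Map each full view name for the model to its description."""
    # Assumption: the model name was created unquoted, so it is upper case.
    name = model_name.upper()
    return {prefix + name: desc for prefix, desc in COMMON_VIEW_PREFIXES.items()}


if __name__ == "__main__":
    # Hypothetical model name, used purely for illustration.
    for view, desc in common_views("nb_model").items():
        print(f"{view:<20} {desc}")
```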

Each one of these new DB views needs its own blog post to explain what information it contains. I'm sure over time I will get round to most of these.