Machine learning is a fascinating topic. It has so much potential, yet very few people talk about using machine learning in production. I've been highlighting the need for this for over 20 years now, and only a very small number of machine learning languages and solutions are suitable for production use. Why? Maybe it is due to the commercial aspects: many of the languages and tools are driven by the open source community, and one of the last things they get round to focusing on is production deployment. Rightly, they are focused on developing more and more machine learning algorithms and features for developing models, but the real value comes from being able to embed machine learning model scoring in production systems. Maybe this is why the dominant players with machine learning in enterprises are still the big old analytics companies.

Yes, that was a bit of a rant, but it is true. Over the summer and the past few months there have been a number of articles about production deployment.

But this is not a new topic. For example, we have had Predictive Model Markup Language (PMML) around for a long time. The aim of PMML was to allow the interchange of models between different languages. This would mean that a data scientist could develop their models using one language and then transfer or translate the model into another language that offers the same machine learning algorithms.

But the problem with this approach is that the model may generate different results in the development or lab environment than it does in production. Why does this happen? Well, the algorithms are developed by different people and companies, and everyone has their own preferences for how these algorithms are implemented.
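One way to catch this kind of drift is to score the same records in both environments and compare the outputs. The following is a minimal sketch of such a parity check, not anything prescribed by PMML; the function name `compare_scores` and the tolerance values are assumptions chosen for illustration.

```python
import math


def compare_scores(dev_scores, prod_scores, rel_tol=1e-6, abs_tol=1e-9):
    """Return the indices where lab and production scores disagree.

    Both arguments are sequences of floats produced by scoring the same
    records, in the same order, in each environment.
    """
    if len(dev_scores) != len(prod_scores):
        raise ValueError("score lists must have the same length")
    return [
        i
        for i, (dev, prod) in enumerate(zip(dev_scores, prod_scores))
        if not math.isclose(dev, prod, rel_tol=rel_tol, abs_tol=abs_tol)
    ]


# Made-up scores: the third record differs in the fourth decimal place.
dev = [0.91, 0.12, 0.5500]
prod = [0.91, 0.12, 0.5501]
mismatches = compare_scores(dev, prod)
print(mismatches)
```

Any index reported here points at a record worth investigating before the translated model is trusted in production.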

To overcome this, some companies would rewrite their machine learning algorithms and models to ensure that development/lab results matched the results in production. But there is a very large cost associated with this development and with ongoing maintenance as the models evolve. This might occur every 3, 6, 9 or 12 months. Sometimes the time to write or rewrite each new version of the model would be longer than its lifespan.

These kinds of problems have been very common and have held back model deployment in production.

In the era of cloud we are now seeing some machine learning cloud solutions making machine learning models available using REST services. These can, very easily, allow for machine learning models to be included in production applications. You are going to hear more about this topic over the coming year.
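To make the idea concrete, here is a minimal sketch of how an application might call a model exposed as a REST service, using only the Python standard library. The endpoint URL, the JSON payload shape and the response format are all hypothetical; each cloud service defines its own.

```python
import json
import urllib.request


def build_scoring_request(url, record):
    """Build a POST request carrying one record as a JSON body."""
    body = json.dumps({"record": record}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def score(url, record, timeout=5.0):
    """Send the record to the scoring service and return the parsed JSON reply."""
    request = build_scoring_request(url, record)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Hypothetical usage (the URL and field names are placeholders):
# result = score("https://example.com/models/churn/score",
#                {"age": 42, "tenure_months": 18})
```

The production application only needs to know the URL and the payload format, so the model behind the service can be retrained or replaced without changing application code.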

But, despite all the claims, wonders and benefits of cloud solutions, they aren't for everyone. Maybe they will be at some time in the future, but that mightn't be for some months or years to come.

So, how can we easily add machine learning model scoring/labeling to our production systems? Well, we need some sort of middleware solution.

Given the current enthusiasm for neural networks, and their need for GPUs, these models cannot (easily) be deployed into production applications.

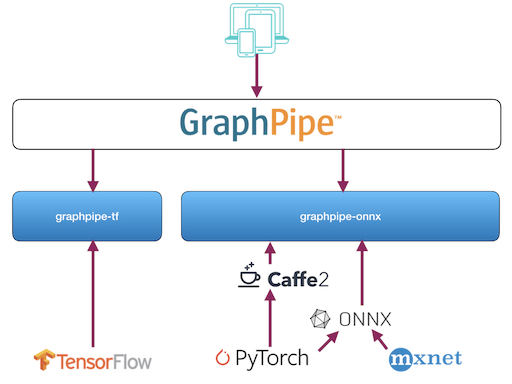

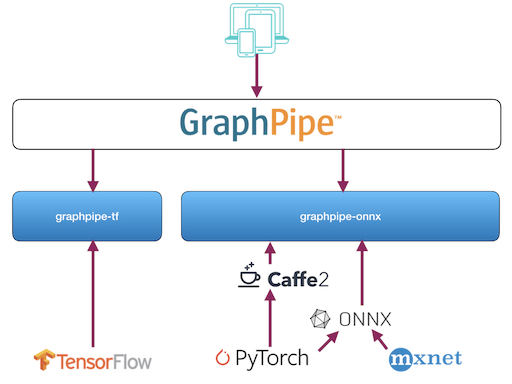

There have been some frameworks put forward for how to enable this. One such framework is called Graphpipe, which has recently been made open source by Oracle.

Graphpipe is a framework for accessing and using machine learning models developed and running on different platforms. The framework allows you to perform model scoring across multiple neural network models and to create ensemble solutions based on these. Graphpipe development has been focused on performance (most other frameworks aren't). It uses flatbuffers for efficient transfer of data and currently integrates with TensorFlow, PyTorch, MXNet, CNTK and Caffe2, in several cases via ONNX.
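The ensemble idea can be sketched independently of any particular model server. The example below is an illustration only and does not use the Graphpipe API: `ensemble_average` is a hypothetical helper, and each "scorer" is just a Python callable standing in for a call to a remote model that returns class probabilities.

```python
from statistics import fmean


def ensemble_average(scorers, features):
    """Average the class probabilities returned by several model scorers.

    Each scorer is a callable that takes the input features and returns
    a list of class probabilities, all of the same length.
    """
    if not scorers:
        raise ValueError("at least one scorer is required")
    predictions = [scorer(features) for scorer in scorers]
    n_classes = len(predictions[0])
    if any(len(p) != n_classes for p in predictions):
        raise ValueError("all scorers must return the same number of classes")
    return [fmean(p[k] for p in predictions) for k in range(n_classes)]


# Stand-in scorers with fixed outputs; in practice each one would wrap
# a request to a model served on a different platform.
def model_a(features):
    return [0.2, 0.8]


def model_b(features):
    return [0.4, 0.6]


combined = ensemble_average([model_a, model_b], features=[1.0, 2.0, 3.0])
print(combined)
```

With a model server in place, each scorer would wrap a client call to one served model. The Graphpipe documentation describes the Python client for this, so check there for the exact call rather than relying on this sketch.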

Expect to have more extensions added to the framework.

Graphpipe website

Graphpipe getting started

Graphpipe blogpost

Graphpipe download

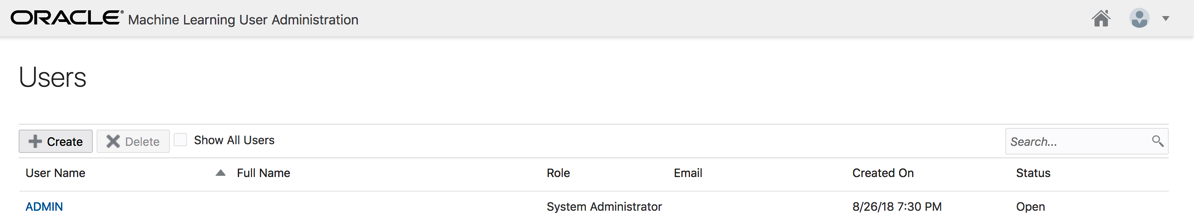

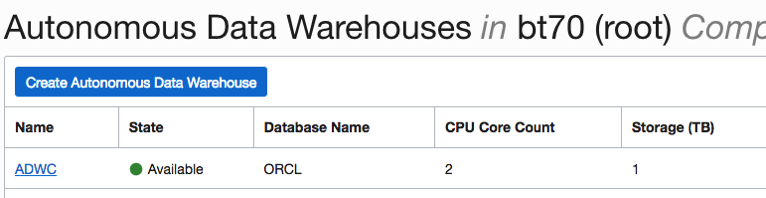

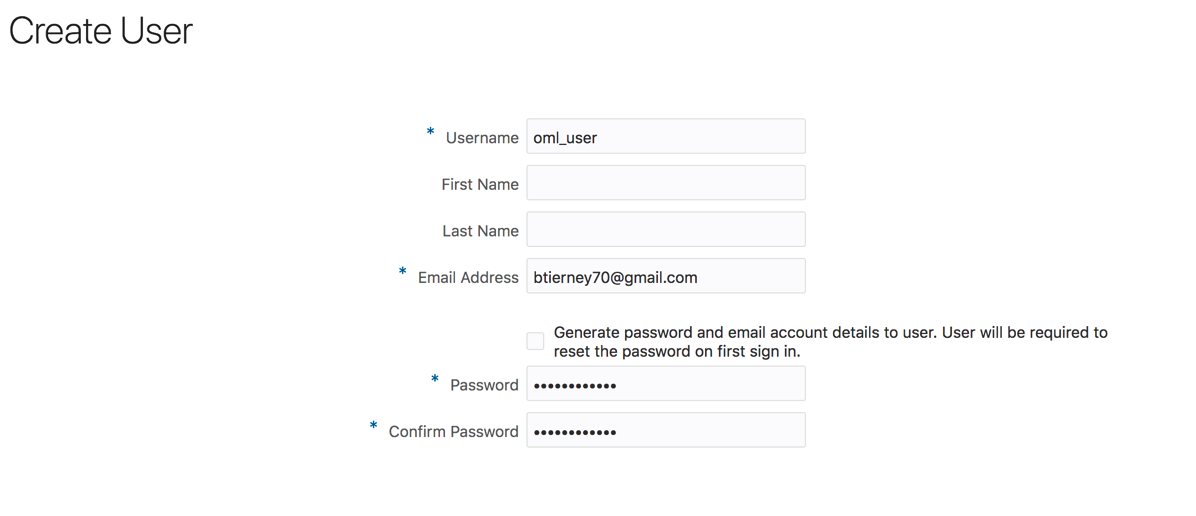


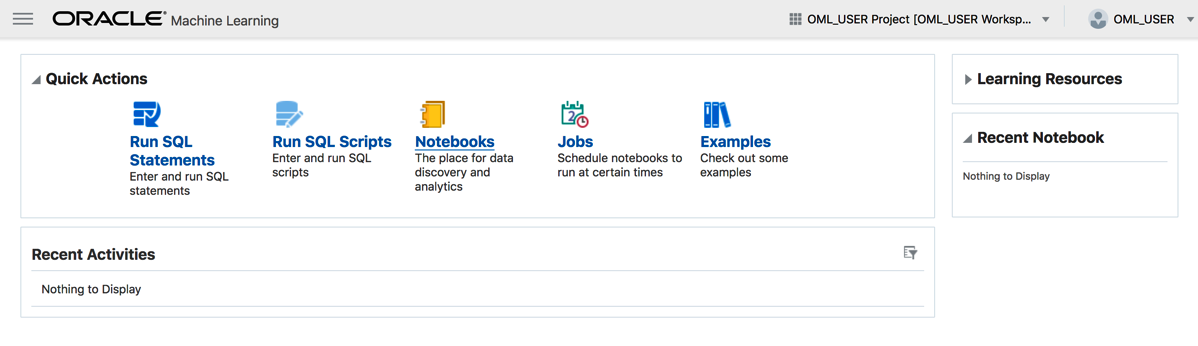

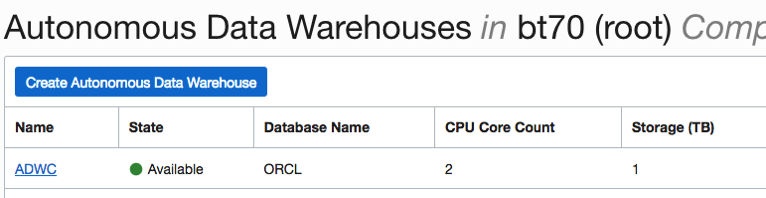
